Z.ai has released GLM-5.1, an open-source AI model designed for long-horizon software engineering tasks. Early reports indicate the model leads the SWE-Bench Pro benchmark, highlighting the rapid evolution of agentic AI systems capable of sustained coding workflows.

In a notable development for artificial intelligence applied to software engineering, Beijing-based Z.ai (formerly Zhipu AI) has introduced GLM-5.1, an open-source large language model designed for sustained agentic coding workflows. Released on April 8, 2026 under the permissive MIT License, the model has quickly drawn attention for its performance on one of the most demanding software engineering benchmarks.

According to early reports, GLM-5.1 scored 58.4 on SWE-Bench Pro, edging past several frontier models including GPT-5.4 (57.7) and Claude Opus 4.6 (57.3).

While the margin is modest (0.7 points over GPT-5.4 and 1.1 points over Claude Opus 4.6), the result is significant because SWE-Bench Pro measures sustained engineering capability rather than short code generation tasks.

A Benchmark Focused on Real-World Engineering

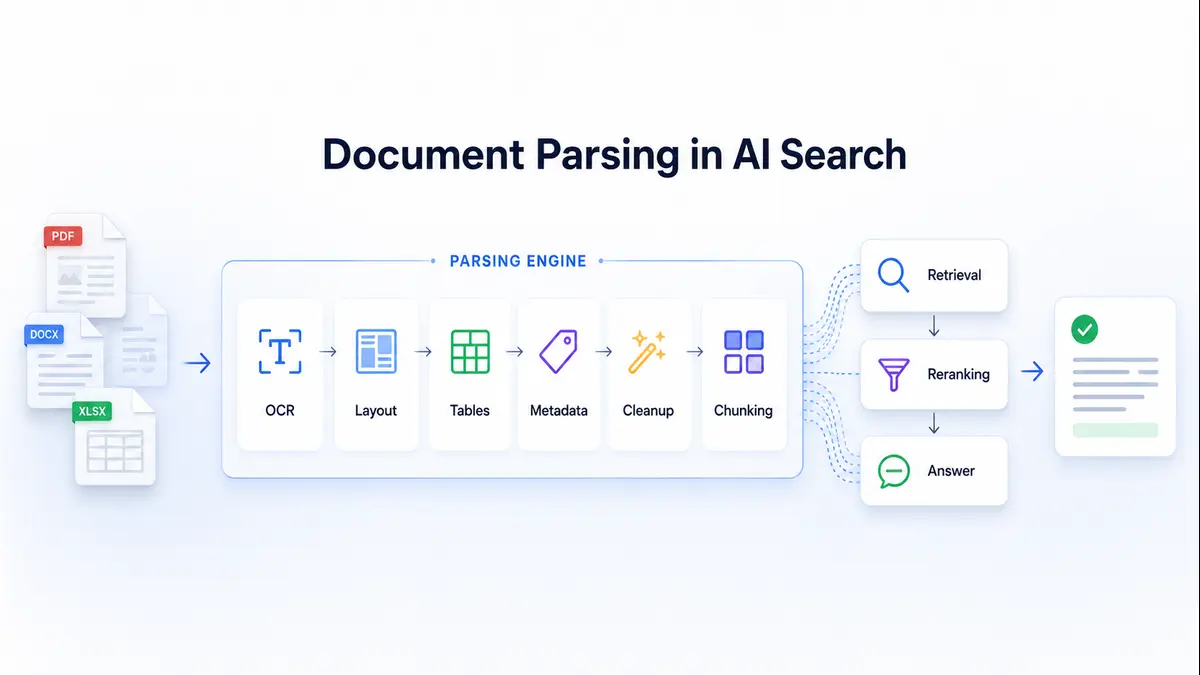

SWE-Bench Pro is widely considered one of the toughest evaluations for coding models. Instead of asking models to produce isolated snippets of code, the benchmark presents real GitHub issues from large repositories and requires the model to:

- understand the repository context

- diagnose the issue

- propose fixes

- iterate through debugging cycles

- produce a working patch

The evaluation runs within very large context windows (up to 200,000 tokens) and may require hundreds or thousands of tool interactions during the process.

This design mirrors how professional software engineering actually unfolds—making the benchmark particularly relevant for evaluating agentic AI systems capable of long, structured problem-solving sessions.
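To make the shape of such a session concrete, the loop can be sketched as a policy that repeatedly picks a tool, observes the result, and eventually submits a patch, all under a fixed tool-call budget. This is a minimal illustration, not the SWE-Bench Pro harness: `run_agent`, `AgentState`, `scripted_policy`, and the toy tools are hypothetical names, and the scripted policy stands in for a real model.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

# Hypothetical types for illustration; not part of any benchmark harness.
Action = Tuple[str, str]  # (tool name, argument)


@dataclass
class AgentState:
    transcript: List[str] = field(default_factory=list)
    tool_calls: int = 0
    patch: str = ""


def run_agent(policy: Callable[[List[str]], Action],
              tools: Dict[str, Callable[[str], str]],
              max_tool_calls: int = 1000) -> AgentState:
    """Ask the policy for an action, run the tool, and feed the result back."""
    state = AgentState()
    while state.tool_calls < max_tool_calls:
        name, arg = policy(state.transcript)
        if name == "submit":  # the policy decides the patch is ready
            state.patch = arg
            break
        result = tools[name](arg)
        state.tool_calls += 1
        state.transcript.append(f"{name}({arg}) -> {result}")
    return state


def scripted_policy(transcript: List[str]) -> Action:
    """Stand-in for a model: read a file, run the tests, then submit."""
    script = [("read_file", "app.py"),
              ("run_tests", ""),
              ("submit", "fix-off-by-one.diff")]
    return script[min(len(transcript), len(script) - 1)]


toy_tools = {
    "read_file": lambda path: f"<contents of {path}>",
    "run_tests": lambda _: "1 failed, 41 passed",
}

final = run_agent(scripted_policy, toy_tools, max_tool_calls=10)
print(final.tool_calls, final.patch)
```

The budget matters as much as the loop itself: a policy that never submits simply stops when `max_tool_calls` is reached, which is one simple way to bound a session that could otherwise run for thousands of steps.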

From GLM-5 to GLM-5.1

GLM-5.1 builds on Z.ai’s earlier GLM-5 model, a large Mixture-of-Experts system released earlier in 2026. The GLM-5 architecture reportedly contains hundreds of billions of parameters, with only a subset activated for each token during inference—a design that improves efficiency while maintaining large model capacity.
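The idea of activating only a subset of parameters per token can be illustrated with generic top-k gating, a common Mixture-of-Experts routing scheme. The sketch below is an assumption for illustration only: the source does not describe GLM-5's actual router, and the scalar "experts", the logits, and the function names are made up.

```python
import math
from typing import Callable, List, Sequence


def softmax(scores: Sequence[float]) -> List[float]:
    """Numerically stable softmax over a short list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def moe_forward(x: float,
                router_logits: Sequence[float],
                experts: Sequence[Callable[[float], float]],
                top_k: int = 2) -> float:
    """Run only the top_k highest-scoring experts and mix their outputs."""
    ranked = sorted(range(len(experts)),
                    key=lambda i: router_logits[i],
                    reverse=True)
    chosen = ranked[:top_k]
    # Renormalize the gate weights over the chosen experts only.
    weights = softmax([router_logits[i] for i in chosen])
    return sum(w * experts[i](x) for w, i in zip(weights, chosen))


# Four toy experts; only two of them run for this input.
toy_experts = [
    lambda v: v + 1.0,
    lambda v: 2.0 * v,
    lambda v: v * v,
    lambda v: -v,
]
toy_logits = [0.1, 2.0, 2.0, -1.0]  # made-up router scores for one token

output = moe_forward(3.0, toy_logits, toy_experts, top_k=2)
print(output)
```

Here the two middle experts tie for the highest score, so each contributes half of the output while the other two are never evaluated. That skipped computation is where the efficiency gain comes from.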

The new version was refined specifically for long-running agentic execution, where models must maintain reasoning consistency over extended interactions rather than responding to single prompts.

Z.ai states that GLM-5.1 supports context windows exceeding 200,000 tokens and includes an optional “thinking” mode designed to encourage structured reasoning before generating final answers.

The Shift Toward Long-Horizon AI Coding

Earlier generations of coding models often excelled at short prompts or isolated functions, but struggled to maintain coherence across prolonged tasks.

GLM-5.1 is designed around a different objective: execution endurance.

According to Z.ai’s technical notes, the model demonstrates a “staircase” improvement pattern during long sessions—where incremental optimizations accumulate before producing significant structural improvements in the solution.

This behavior reflects a broader shift in AI development: moving from vibe-coding assistants toward autonomous engineering collaborators capable of maintaining strategy over long workflows.

Evidence from Extended Engineering Tasks

Z.ai reports several demonstrations illustrating this sustained capability.

In one experiment involving vector database optimization written in Rust, the model reportedly executed more than 6,000 tool calls across hundreds of iterations, gradually refining indexing and compression techniques. The final system increased query throughput dramatically while preserving retrieval accuracy.

In another evaluation using KernelBench Level 3, which measures optimization of machine-learning GPU kernels, GLM-5.1 delivered a 3.6× geometric mean speed improvement over baseline PyTorch implementations.
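A geometric mean speedup is the n-th root of the product of per-task speedups, which keeps a single outlier kernel from dominating the summary. The sketch below shows the calculation on made-up timings; the numbers and the function name are illustrative assumptions and are not KernelBench data.

```python
import math
from typing import Sequence


def geometric_mean_speedup(baseline_ms: Sequence[float],
                           optimized_ms: Sequence[float]) -> float:
    """Geometric mean of per-task speedups (baseline time / optimized time)."""
    if not baseline_ms or len(baseline_ms) != len(optimized_ms):
        raise ValueError("need two non-empty timing lists of equal length")
    # Averaging logs avoids overflow from multiplying many ratios together.
    log_sum = sum(math.log(b / o) for b, o in zip(baseline_ms, optimized_ms))
    return math.exp(log_sum / len(baseline_ms))


# Hypothetical timings in milliseconds: per-task speedups of 2x, 4x, and 8x.
baseline = [8.0, 8.0, 8.0]
optimized = [4.0, 2.0, 1.0]

geo = geometric_mean_speedup(baseline, optimized)
arith = sum(b / o for b, o in zip(baseline, optimized)) / len(baseline)
print(f"geometric mean: {geo:.2f}x, arithmetic mean: {arith:.2f}x")
```

For these toy values the geometric mean is about 4x while the arithmetic mean is higher, because the 8x task pulls the arithmetic average up. Python's standard library also offers `statistics.geometric_mean` for the same calculation on a list of ratios.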

These experiments highlight the type of workloads agentic systems are increasingly expected to handle: complex optimization tasks requiring planning, iteration, and tool-assisted execution.

Open Weights and Deployment Flexibility

One of the most notable aspects of GLM-5.1 is its open-source release under the MIT License, making both model weights and tooling broadly accessible to developers.

The model can reportedly be deployed through:

- vLLM

- SGLang

- Z.ai’s managed API platform

It also integrates with several existing agent frameworks used for autonomous coding systems.
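For self-hosted deployments, vLLM can expose an OpenAI-compatible HTTP server, so a chat request is a JSON POST to a `/v1/chat/completions` endpoint. The sketch below only builds such a request with the standard library. The base URL assumes a server running locally on port 8000, and `MODEL_ID` is a placeholder rather than a confirmed identifier. The request parameters for the optional "thinking" mode are not shown because the source does not specify them.

```python
import json
import urllib.request

BASE_URL = "http://localhost:8000/v1"   # assumption: local OpenAI-compatible server
MODEL_ID = "your-glm-5.1-model-id"      # placeholder; use the ID from the model card


def build_chat_request(prompt: str, max_tokens: int = 512) -> urllib.request.Request:
    """Build (but do not send) a chat completion request."""
    payload = {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request: urllib.request.Request) -> str:
    """Send the request and return the first reply (requires a running server)."""
    with urllib.request.urlopen(request) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


req = build_chat_request("Summarize the failing test in one sentence.")
print(req.full_url)
```

Calling `send(req)` only works once a server is actually listening at `BASE_URL`. Building and sending are kept separate here so the payload can be inspected or logged first.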

Open access to a model capable of sustained agentic execution could accelerate experimentation across developer communities, particularly for teams exploring autonomous software development pipelines.

Implications for the AI Engineering Landscape

The release of GLM-5.1 reflects a broader shift in the trajectory of AI development. In recent months, researchers have been exploring architectures that move beyond simple next-token prediction toward systems capable of reasoning and sustained execution. One example is Joint Embedding Predictive Architecture (JEPA), which focuses on predictive representation learning rather than generative token prediction.

Rather than focusing purely on short benchmark tasks, the industry is increasingly measuring models by their ability to remain useful across extended engineering sessions, where planning, debugging, and refinement unfold over many iterations.

For organizations facing persistent shortages of experienced software engineers, models capable of maintaining coherent multi-hour workflows may eventually change how development teams operate.

At the same time, long-running agents still require careful orchestration, sandboxing, and human oversight to prevent compounding errors or unintended system changes.
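One simple form of oversight is a gate between the agent and the shell: commands on an allowlist run automatically, and everything else is escalated to a person. The sketch below is a hypothetical illustration of that idea. The allowlist, the blocked subcommands, and `review_command` are assumptions, and a check like this complements real sandboxing rather than replacing it.

```python
import shlex
from typing import Callable

# Hypothetical policy: programs the agent may run without asking.
SAFE_COMMANDS = {"ls", "cat", "git", "pytest"}
# Git subcommands that change shared or local state and need sign-off.
GATED_GIT_SUBCOMMANDS = {"push", "reset", "clean"}


def review_command(command: str, ask_human: Callable[[str], bool]) -> bool:
    """Return True if the command may run, escalating risky ones to a human."""
    parts = shlex.split(command)
    if not parts:
        return False  # nothing to run
    program = parts[0]
    if program not in SAFE_COMMANDS:
        return ask_human(command)
    if program == "git" and len(parts) > 1 and parts[1] in GATED_GIT_SUBCOMMANDS:
        return ask_human(command)
    return True


def always_deny(command: str) -> bool:
    """Stand-in reviewer that rejects every escalated command."""
    return False


for cmd in ["pytest -q", "git status", "git push origin main", "rm -rf build"]:
    verdict = "run" if review_command(cmd, always_deny) else "blocked"
    print(f"{cmd!r}: {verdict}")
```

In a real pipeline, `ask_human` would be replaced by an approval prompt or a ticketing step, and the allowed commands would still execute inside an isolated environment.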

A New Baseline for Agentic Coding

GLM-5.1 does not represent the final stage of agentic software engineering, but it does establish an important new reference point.

By combining strong benchmark results with openly available weights, the model demonstrates that long-horizon engineering agents are becoming a practical research and development direction, rather than a purely theoretical goal.

The next phase of the field will likely focus on how these systems integrate into real development environments—where reliability, safety, and sustained reasoning matter as much as raw model capability.