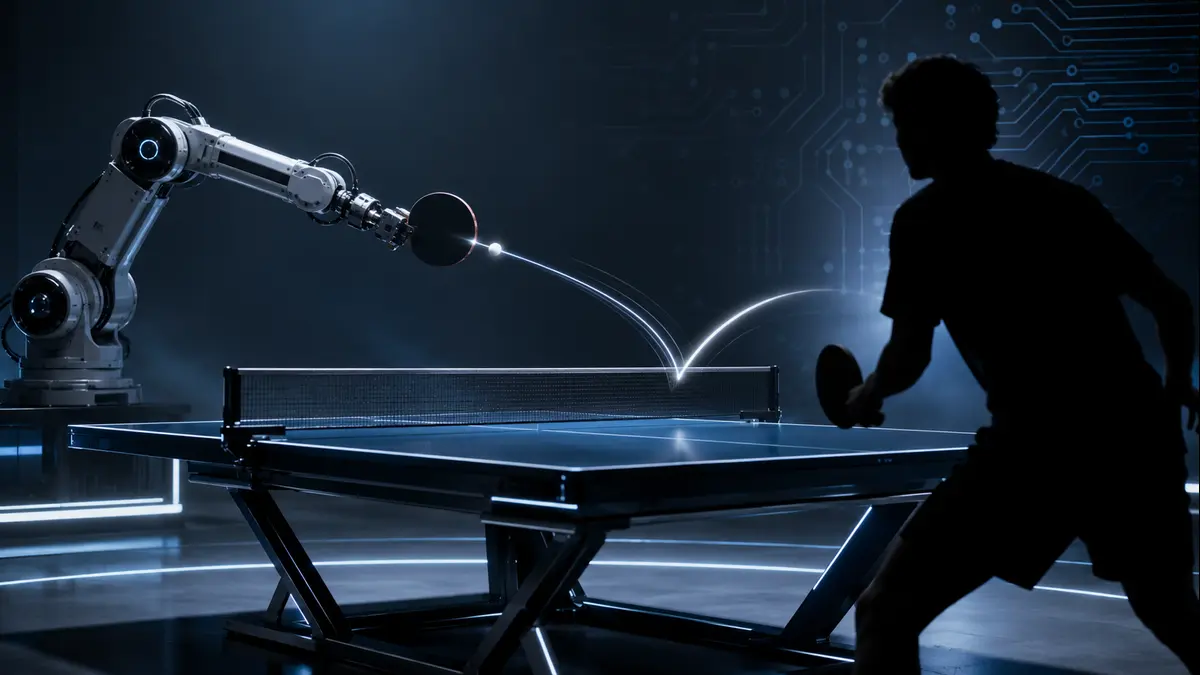

Sony AI’s Ace marks a major step in artificial intelligence: moving from digital games into real-world physical intelligence. By combining perception, reinforcement learning, low-latency control, and robotics, Ace shows why the next frontier of AI may be machines that can act safely and intelligently in dynamic physical environments.

In the quiet hum of a Tokyo lab, a robotic arm whips across a table, returning a blistering serve laced with vicious spin. Its human opponent, an elite athlete with years of honed instinct, lunges but falls short. This isn’t science fiction or a carefully staged demo. It’s Sony AI’s Ace, a system that has stepped boldly into the chaotic arena of real-world physical competition.

For decades, artificial intelligence has dazzled us in pristine digital domains. Ace changes the conversation. It doesn’t just think faster than humans. It perceives, decides, and acts in the messy, unpredictable physical world where latency, friction, and human whims collide in milliseconds.

AI’s Superhuman Era Was Mostly Digital

Recall the milestones that once felt revolutionary. IBM’s Deep Blue dismantled Garry Kasparov at chess in 1997. Nearly two decades later, DeepMind’s AlphaGo mastered the intuitive complexities of Go, defeating world champions through a blend of deep neural networks and reinforcement learning. These triumphs unfolded in environments with perfect information, discrete rules, and instantaneous state updates. No gravity. No sensor noise. No aching muscles or split-second hardware delays.

AI excelled at “thinking fast” because the battlefield was abstract and forgiving. Victory came from superior search, pattern recognition, and strategic depth within silicon confines. Physical intelligence, however, requires something far harder: seamless integration of high-speed sensing, real-time prediction, and precise actuation under constant uncertainty. Ace marks a pivotal shift. It confronts the full stack of embodied challenges that digital games conveniently sidestep.

Why Table Tennis Is a Brutal Robotics Challenge

Table tennis might appear deceptively simple to the casual observer—a small ball, a net, quick rallies. In reality, it represents one of the most unforgiving tests for autonomous systems. Professional-level shots can approach 20 m/s, while high-spin shots create extreme trajectory changes that push both human and robotic players to the limits of sensing and motor control.

Human players rely on intuition forged through thousands of hours, subtle body cues, and reaction times around 230 milliseconds for elite athletes. Robots face harsher constraints. They must track the ball’s 3D position with millimeter accuracy, estimate angular velocity in real time, predict its post-bounce trajectory accounting for air drag and Magnus effect, then command a multi-joint arm to intercept and return with controlled racket angle and velocity—all while adapting to an adversarial opponent who actively exploits weaknesses.
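To make the prediction step concrete, here is a minimal sketch of ball flight under gravity, air drag, and the Magnus effect. It is not Ace's model: the drag and lift coefficients, the simplified Magnus term, and the function names are illustrative assumptions, spin is held constant, and the bounce is not modeled at all.

```python
import numpy as np

# Illustrative constants (assumptions, not values from the Ace study).
MASS = 2.7e-3                 # kg, standard table tennis ball
RADIUS = 0.02                 # m
RHO = 1.2                     # kg/m^3, air density
AREA = np.pi * RADIUS**2      # cross-sectional area, m^2
C_DRAG = 0.4                  # assumed drag coefficient
C_LIFT = 0.6                  # assumed Magnus (lift) coefficient
GRAVITY = np.array([0.0, 0.0, -9.81])

def acceleration(vel, spin):
    """Gravity plus quadratic drag plus a simplified Magnus term."""
    speed = np.linalg.norm(vel)
    drag = -0.5 * RHO * C_DRAG * AREA * speed * vel
    # The Magnus force is perpendicular to both the spin axis and the velocity.
    magnus = 0.5 * RHO * C_LIFT * AREA * RADIUS * np.cross(spin, vel)
    return GRAVITY + (drag + magnus) / MASS

def predict(pos, vel, spin, horizon=0.3, dt=1e-3):
    """Integrate the flight forward in time with semi-implicit Euler steps."""
    pos = np.array(pos, dtype=float)
    vel = np.array(vel, dtype=float)
    spin = np.array(spin, dtype=float)
    for _ in range(int(round(horizon / dt))):
        vel = vel + acceleration(vel, spin) * dt
        pos = pos + vel * dt
    return pos, vel

# A ball moving along +x with topspin (spin about +y) dips faster than a flat ball.
flat_pos, _ = predict([0, 0, 0.3], [10, 0, 0], [0, 0, 0], horizon=0.2)
topspin_pos, _ = predict([0, 0, 0.3], [10, 0, 0], [0, 300, 0], horizon=0.2)
```

Even in this toy version, the same launch velocity produces visibly different heights once spin is added, which is why spin has to be estimated and not just position.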

Any latency compounds rapidly. A 50-millisecond delay might as well be an eternity at these velocities. Traditional robotic approaches, reliant on rigid programming or model-based planning, crumble under such variability. This is why Ace stands apart. It doesn’t follow scripted plays. It learns to thrive in the storm.
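A quick back-of-the-envelope calculation shows the scale of the problem. Taking the 20 m/s shot speed mentioned above and treating it as constant over the delay (a simplification), the ball covers

$$
d = v \,\Delta t = 20\ \text{m/s} \times 0.050\ \text{s} = 1.0\ \text{m}
$$

during a 50-millisecond delay, which is more than a third of a regulation 2.74 m table.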

The Sim-to-Real Gap: The Real Heart of the Story

Here’s where the narrative deepens technically. Modern reinforcement learning agents can rack up millions of virtual hours in simulation, mastering policies through trial and error at scales impossible for humans. Yet the transfer to reality, hindered by the notorious sim-to-real gap, has long been robotics’ Achilles’ heel.

Simulations approximate physics but rarely capture every nuance: camera imperfections, variable lighting, ball deformation upon impact, motor backlash, vibrational resonances, or the subtle compliance in mechanical joints. A policy that looks flawless in a perfect digital twin often fails spectacularly when deployed, as tiny distributional shifts cascade into catastrophic errors.

Sony AI tackled this head-on. They built a highly accurate physics simulator seeded with real human gameplay data. Training occurred entirely in this environment using deep reinforcement learning. But success hinged on bridging the divide through careful domain randomization, noise modeling, and architectural innovations that made the learned policies robust to real-world discrepancies. This isn’t mere incremental progress. It’s a testament to how far sim-to-real techniques have matured, turning simulation from a convenient shortcut into a genuine launchpad for physical competence.
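As a rough illustration of what domain randomization and noise modeling mean in practice, the sketch below resamples physics and sensor parameters for every training episode. The parameter names, ranges, and helper functions are hypothetical and chosen only for illustration; the article does not say which quantities Sony AI randomized or over what ranges.

```python
import random
from dataclasses import dataclass

@dataclass
class EpisodeParams:
    restitution: float       # ball-table bounce coefficient
    friction: float          # ball-table friction coefficient
    drag_coeff: float        # aerodynamic drag coefficient
    obs_noise_std_m: float   # std of ball-position noise, in meters
    obs_delay_steps: int     # sensing delay, in control steps

# Hypothetical ranges chosen for illustration only.
RANGES = {
    "restitution": (0.85, 0.95),
    "friction": (0.15, 0.35),
    "drag_coeff": (0.35, 0.50),
    "obs_noise_std_m": (0.001, 0.005),
}
MAX_DELAY_STEPS = 3

def sample_episode_params(rng):
    """Draw a fresh set of physics and sensor parameters for one episode."""
    values = {name: rng.uniform(lo, hi) for name, (lo, hi) in RANGES.items()}
    return EpisodeParams(obs_delay_steps=rng.randint(0, MAX_DELAY_STEPS), **values)

def noisy_observation(true_pos, params, rng):
    """Corrupt a ground-truth ball position the way a real sensor might."""
    return tuple(x + rng.gauss(0.0, params.obs_noise_std_m) for x in true_pos)

rng = random.Random(0)
episodes = [sample_episode_params(rng) for _ in range(3)]
observed = noisy_observation((0.1, 0.2, 0.3), episodes[0], rng)
```

Because the policy never sees the same simulator twice, it cannot overfit to one exact set of physics, which is the intuition behind using randomization to survive real-world discrepancies.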

How Ace Bridges the Gap

Ace integrates three tightly coupled pillars: perception, control, and hardware. Its vision system deploys nine synchronized active pixel sensor (APS) cameras using Sony IMX273 sensors for 200 Hz 3D ball triangulation with just 3.0 mm error and 10.2 ms latency. Complementing these are three gaze control systems featuring event-based vision sensors (IMX636), pan-tilt mirrors, and tunable lenses that track spin at frequencies up to 700 Hz.
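Multi-camera triangulation can be sketched with the standard linear (DLT) method, shown below for three idealized pinhole cameras. This is a textbook technique used here for illustration, not Ace's pipeline: the camera layout, intrinsics, and function names are assumptions, and a real system would also handle calibration, lens distortion, synchronization, and pixel noise.

```python
import numpy as np

def project(P, point):
    """Project a 3D point to pixel coordinates with a 3x4 camera matrix."""
    x = P @ np.append(point, 1.0)
    return x[:2] / x[2]

def triangulate(proj_mats, pixels):
    """Linear (DLT) triangulation of one 3D point from two or more views."""
    rows = []
    for P, (u, v) in zip(proj_mats, pixels):
        # Each view contributes two linear constraints on the homogeneous point.
        rows.append(u * P[2] - P[0])
        rows.append(v * P[2] - P[1])
    _, _, vt = np.linalg.svd(np.asarray(rows))
    X = vt[-1]  # right singular vector with the smallest singular value
    return X[:3] / X[3]

def make_camera(center, focal_px=800.0, cx=320.0, cy=240.0):
    """Hypothetical pinhole camera at `center`, looking down +z, no rotation."""
    K = np.array([[focal_px, 0.0, cx], [0.0, focal_px, cy], [0.0, 0.0, 1.0]])
    Rt = np.hstack([np.eye(3), -np.asarray(center, dtype=float).reshape(3, 1)])
    return K @ Rt

cameras = [make_camera(c) for c in ([0, 0, 0], [0.5, 0, 0], [0, 0.5, 0])]
ball = np.array([0.1, 0.2, 2.0])
pixels = [project(P, ball) for P in cameras]
estimate = triangulate(cameras, pixels)  # recovers `ball` up to numerical error
```

Adding more views simply adds more rows to the linear system, which is one reason a larger camera array can reduce error when individual detections are noisy.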

Event-based cameras shine here. Unlike traditional frame-based sensors that capture full images at fixed rates, they register only pixel-level brightness changes asynchronously. This yields microsecond temporal resolution for fast motion while slashing data bandwidth and latency.
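The idea can be approximated in a few lines by comparing two frames and keeping only the pixels whose log-brightness changed by more than a threshold. This is a frame-based emulation for illustration only: a real event sensor fires per pixel asynchronously with its own timestamps, and the threshold and function name here are assumptions.

```python
import numpy as np

def events_from_frames(prev, curr, threshold=0.2):
    """Return (row, col, polarity) for pixels whose log-brightness changed enough."""
    diff = np.log1p(curr.astype(float)) - np.log1p(prev.astype(float))
    rows, cols = np.nonzero(np.abs(diff) >= threshold)
    polarity = np.sign(diff[rows, cols]).astype(int)
    return list(zip(rows.tolist(), cols.tolist(), polarity.tolist()))

# A bright "ball" pixel moves one column to the right on a static background.
prev = np.full((64, 64), 10)
curr = np.full((64, 64), 10)
prev[5, 5] = 200
curr[5, 6] = 200

events = events_from_frames(prev, curr)
```

Here a 64 x 64 frame holds 4,096 pixels, yet only two events are produced: one negative where the ball left and one positive where it arrived. That sparsity is the source of the bandwidth savings described above.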

On the control side, Ace employs model-free deep reinforcement learning with an asymmetric actor-critic architecture. Policies train in simulation where the critic enjoys privileged access to perfect state information, while the actor operates solely on noisy, realistic sensor histories, mirroring deployment conditions. A bank of specialized policies allows dynamic selection during rallies, enabling adaptive shot-making without hand-crafted rules.
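The asymmetry is easiest to see in the inputs each network receives. The structural sketch below uses tiny untrained NumPy networks and omits the training loop entirely; the dimensions, layer sizes, and the choice of a state-action critic are hypothetical and are not taken from the Ace study.

```python
import numpy as np

rng = np.random.default_rng(0)

STATE_DIM = 12    # hypothetical privileged state: exact ball pose, spin, joints
OBS_DIM = 6       # hypothetical noisy observation per time step
HISTORY = 4       # number of past observations the actor sees
ACTION_DIM = 8    # one command per joint of an eight-joint arm

def mlp(in_dim, out_dim, hidden=32):
    """Create weights for a tiny two-layer network (untrained)."""
    return {
        "w1": rng.normal(0.0, 0.1, (in_dim, hidden)),
        "w2": rng.normal(0.0, 0.1, (hidden, out_dim)),
    }

def forward(net, x):
    return np.tanh(x @ net["w1"]) @ net["w2"]

# Actor: deployable, sees only a history of noisy sensor observations.
actor = mlp(OBS_DIM * HISTORY, ACTION_DIM)
# Critic: used only during training, sees the simulator's exact state.
critic = mlp(STATE_DIM + ACTION_DIM, 1)

def act(obs_history):
    """Map stacked noisy observations to bounded joint commands."""
    return np.tanh(forward(actor, np.concatenate(obs_history)))

def value(true_state, action):
    """Score an action using privileged simulator state."""
    return forward(critic, np.concatenate([true_state, action]))[0]

history = [rng.normal(size=OBS_DIM) for _ in range(HISTORY)]
action = act(history)
score = value(rng.normal(size=STATE_DIM), action)
```

At deployment only `act` is needed, so the privileged state that helped the critic during training never has to exist on the real robot.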

The robotic platform itself features a custom eight-joint design capable of executing high-speed trajectories. End-to-end system latency clocks in at an astonishing 20.2 milliseconds, roughly an order of magnitude faster than elite human visual-motor loops.
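The figures quoted in this article can be turned into a rough time budget. The sketch below assumes a ball crossing a regulation 2.74 m table at a constant 20 m/s, which ignores drag, the bounce, and where the ball is actually struck; the function name and this framing are illustrative assumptions, not an analysis from the study.

```python
TABLE_LENGTH_M = 2.74      # regulation table length
BALL_SPEED_MPS = 20.0      # fast shot speed cited in the article
HUMAN_REACTION_S = 0.230   # elite human reaction time cited in the article
ACE_LATENCY_S = 0.0202     # Ace end-to-end latency cited in the article

def time_left(latency_s, distance_m=TABLE_LENGTH_M, speed_mps=BALL_SPEED_MPS):
    """Seconds left to move once the system has reacted (negative means too late)."""
    return distance_m / speed_mps - latency_s

flight_time = TABLE_LENGTH_M / BALL_SPEED_MPS    # about 0.137 s
speedup = HUMAN_REACTION_S / ACE_LATENCY_S       # about 11x

human_margin = time_left(HUMAN_REACTION_S)       # negative
ace_margin = time_left(ACE_LATENCY_S)            # about 0.117 s
```

Under these assumptions the flight lasts less than a pure human reaction time, which is consistent with players relying on anticipation and body cues, while Ace keeps most of the flight available for moving the arm.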

In the Nature study, Ace defeated elite players in three out of five matches and lost its two matches against professional players. Sony AI later reported that improved versions of Ace defeated professional players in December 2025 and March 2026. Ace also consistently achieved more than a 75% return rate for shots with spin up to 450 rad/s, showing strong performance against high-spin play.

“What happens when a ball hits the net? In table tennis, net contact creates unpredictable trajectories. For Ace, Sony AI’s physical AI research system, these rare events were one of the hardest real-world conditions to address.” (Sony AI, @SonyAI_global, April 23, 2026)

Why This Matters Beyond Sport

Ace transcends ping-pong. It signals the maturation of physical AI: systems capable of operating reliably in high-speed, uncertain environments shared with humans.

Consider advanced manufacturing, where robots must manipulate delicate, moving components on dynamic assembly lines without halting production for every anomaly. Emergency response robots could navigate debris-strewn disaster zones, making split-second decisions amid structural instability. Warehousing and logistics stand to gain safer human-robot collaboration, with machines that anticipate and react to unpredictable worker movements.

In medicine, precision robotics for surgery or rehabilitation could benefit from similar low-latency perception-action loops. Even sports training gains a powerful tool: tireless robotic opponents that simulate rare techniques or push athletes beyond human limits. Sony AI frames this explicitly as progress toward AI that functions safely and effectively in the dynamic physical realm.

The economic and societal implications ripple outward. Tasks once deemed too dexterous or reactive for automation edge closer to feasibility, potentially reshaping labor markets while opening avenues for human-AI partnership rather than pure replacement.

What This Does Not Mean

Tempering excitement with clarity remains essential. Ace does not possess human-like understanding of the sport; its “intelligence” emerges from statistical patterns and optimized control policies, not embodied cognition or emotional insight. It excels through superhuman consistency and reaction speed, not creative flair in the philosophical sense.

This breakthrough does not imply wholesale automation of all physical labor tomorrow. Significant challenges persist in generalization across diverse environments, long-horizon planning, and safe operation at scale without extensive engineering. Athletes retain their central role; sport’s enduring appeal lies in human drama, resilience, failure, and triumph, elements no machine can replicate.

Ace proves perception, decision-making, and actuation can integrate at expert levels in reality. Yet it also highlights how far we must travel before physical AI becomes ubiquitous and truly versatile. As the robot rallies against flesh-and-blood champions, spectators witness more than technical victory. They see a mirror held to human ingenuity: our drive to build machines that extend capabilities while reminding us of qualities that remain uniquely ours, such as the sweat, the roar of the crowd, and the unpredictable spark of competition. In bridging digital prowess with physical grace, Ace doesn’t diminish humanity. It expands the playground where both can evolve.

The table tennis table, once a purely human domain, now hosts a new kind of dialogue. One where silicon and sinew push each other toward unprecedented heights. The game, as they say, has only just begun.