As AI agents move from prototypes to deployable products, framework choice is becoming one of the most important architectural decisions for builders. Here is a technical guide to the frameworks shaping that transition in 2026.

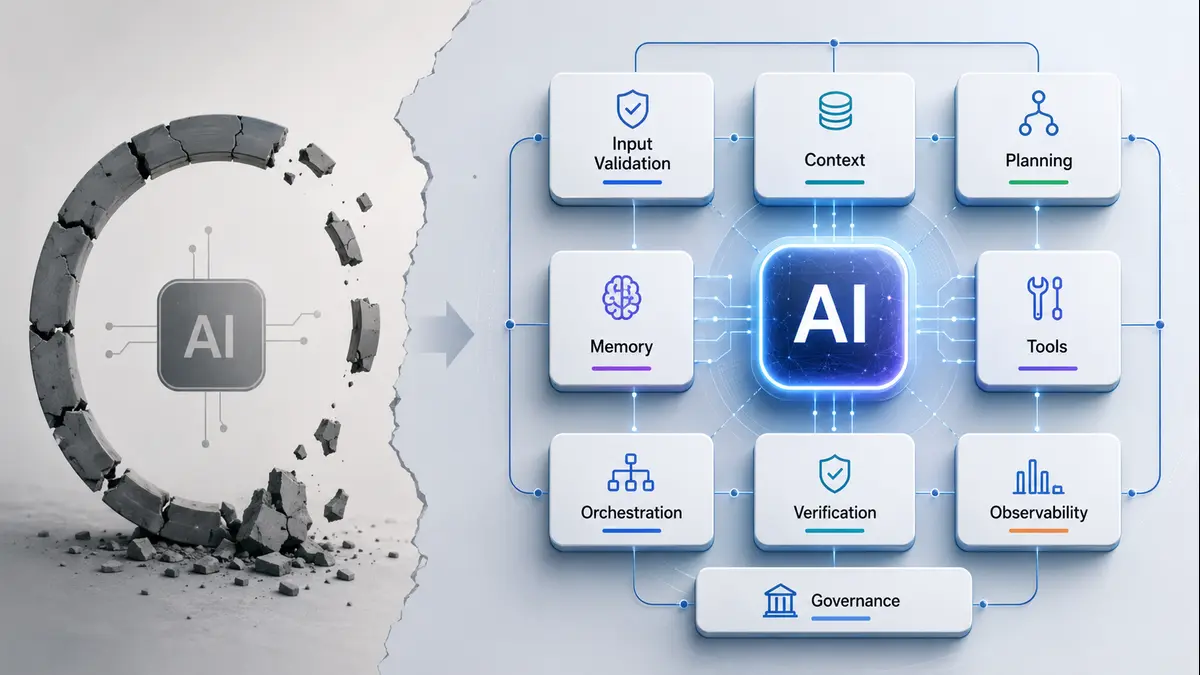

For much of the early generative AI wave, most software teams were occupied with a relatively simple challenge: connecting a large language model to a prompt, a few tools, and perhaps a document store. That phase produced thousands of demos, copilots, and question-answering bots. But the market has now moved meaningfully beyond that stage.

Across industries, AI systems are increasingly expected to do more than answer questions. They are expected to reason through tasks, call external tools, collaborate with other models, maintain memory, route decisions conditionally, and complete multi-step objectives with limited human intervention. In other words, they are expected to function as agents.

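This transition has created a new engineering question, one that many teams underestimate until late in development: which orchestration architecture should the agent run on? Answering it starts with a clear picture of what an agent framework actually does.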

What Exactly Is an AI Agent Framework?

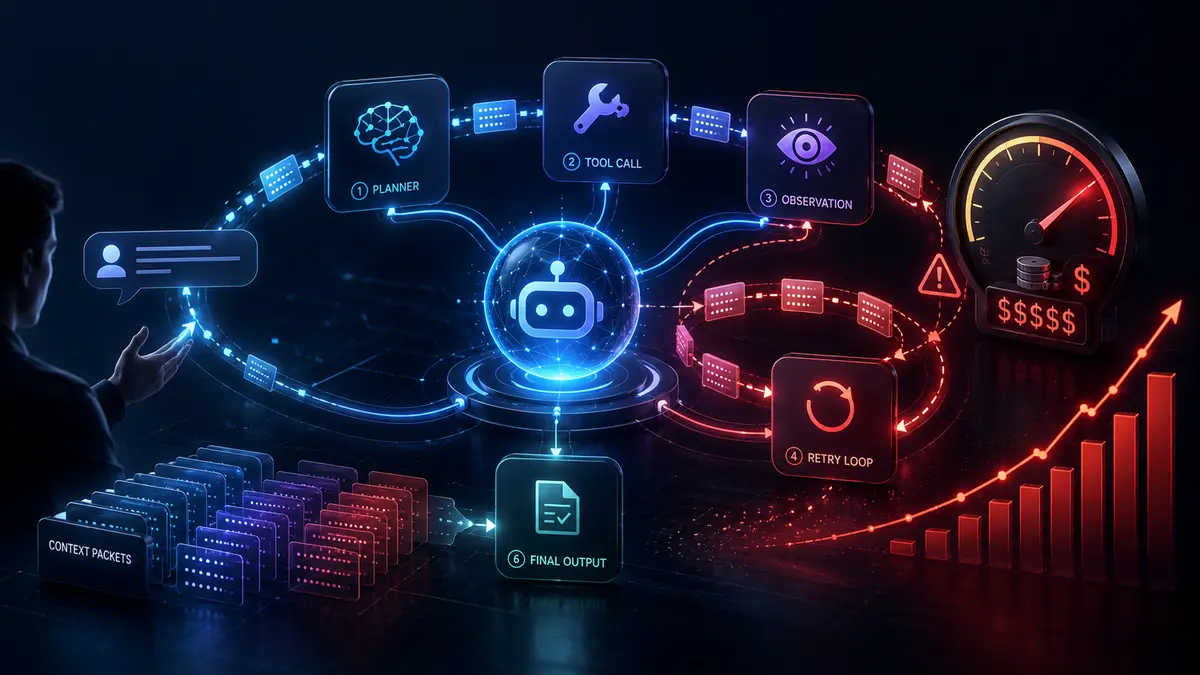

An AI agent framework is a software layer that allows a large language model to move beyond one-time generation and into iterative execution. Instead of responding once to a prompt, the model is given an environment in which it can:

- access tools,

- retrieve memory,

- inspect outputs,

- decide next actions,

- call APIs,

- hand tasks to other agents,

- and continue reasoning until a goal condition is satisfied.

The large language model acts as the reasoning engine, but the framework acts as the operational nervous system. This distinction matters because the same LLM can behave radically differently depending on the orchestration logic around it. A GPT-powered assistant wrapped in a linear tool-calling loop is not the same as a GPT-powered agent running on a persistent graph with conditional execution, fallback checkpoints, and human approval gates.
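The iterative loop described above can be sketched in a few lines of plain Python. This is a minimal illustration, not any particular framework's implementation: `fake_model`, `TOOLS`, and `run_agent` are hypothetical names, and the "model" is a hard-coded stand-in for a real LLM call.

```python
from typing import Callable

# Hypothetical tool registry; names and behavior are illustrative only.
TOOLS: dict[str, Callable[[str], str]] = {
    "search": lambda query: f"results for {query!r}",
    "calculator": lambda expr: str(sum(int(part) for part in expr.split("+"))),
}


def fake_model(goal: str, history: list[dict]) -> dict:
    """Stand-in for an LLM call: picks the next action from the history."""
    if not history:
        return {"action": "calculator", "input": "2+3"}
    return {"action": "finish", "input": f"{goal}: {history[-1]['observation']}"}


def run_agent(goal: str, model=fake_model, max_steps: int = 5) -> str:
    """Loop: ask the model for an action, run the tool, feed the result back."""
    history: list[dict] = []
    for _ in range(max_steps):
        decision = model(goal, history)
        if decision["action"] == "finish":  # goal condition satisfied
            return decision["input"]
        tool = TOOLS[decision["action"]]
        observation = tool(decision["input"])
        history.append({**decision, "observation": observation})
    raise RuntimeError("step budget exhausted before the goal was reached")


answer = run_agent("add numbers")
```

The `max_steps` budget is the important design choice here: without it, a model that never emits a finish action would loop indefinitely.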

In practical terms, frameworks are what convert language intelligence into usable software behavior. As the broader agent economy matures, these frameworks are increasingly becoming the hidden infrastructure behind not just internal enterprise assistants, but standalone deployable AI products.

Which Orchestration Architecture Should the Agent Run On?

At first glance, this may appear to be a routine developer tooling decision. In reality, it is one of the most consequential architectural choices in any agentic deployment. The framework selected at the beginning quietly determines how state is persisted, how retries are managed, how workflows branch, how multiple agents communicate, how human approvals are inserted, and how observable or debuggable the full system remains once it moves into production.

An agent that works elegantly in a prototype can become brittle, opaque, and prohibitively expensive under real user traffic if the orchestration layer underneath is not chosen carefully. That is why the modern AI builder is no longer simply choosing an LLM.

The builder is choosing an orchestration philosophy.

The Five Architectural Families Defining the Agent Framework Market

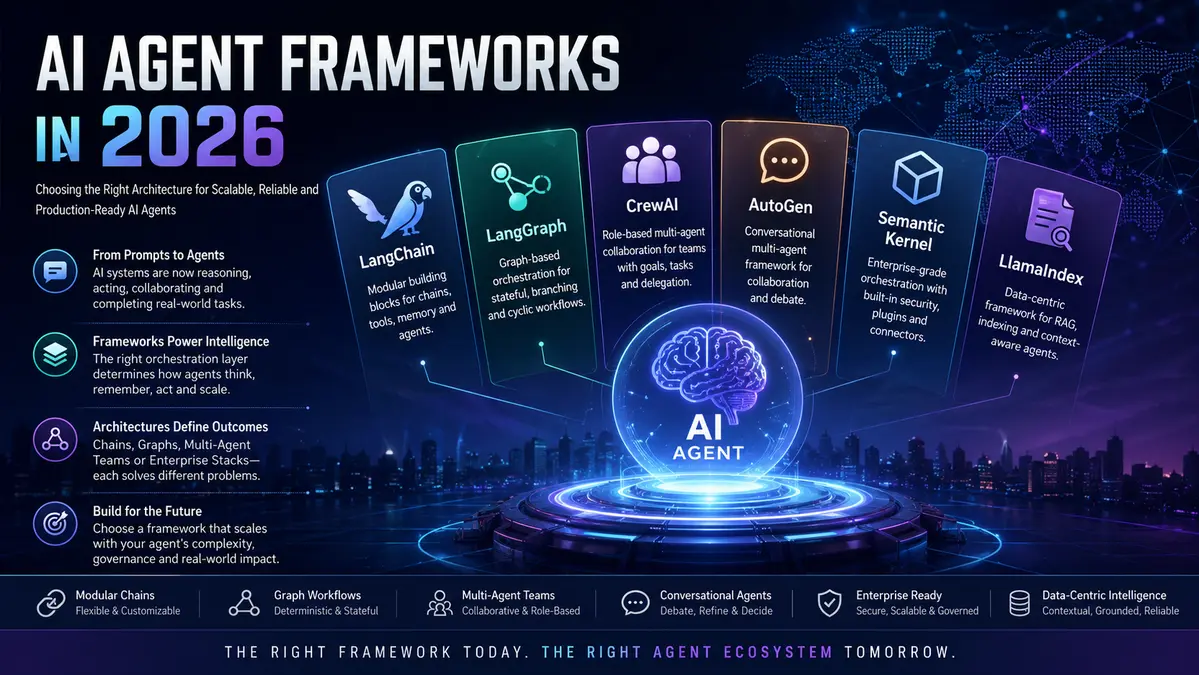

Although dozens of frameworks now exist, most of them can be understood through five dominant architectural categories.

1. Modular Chain-and-Tool Frameworks: Flexible but Hands-On

This was the first major generation of agent tooling.

Here, an agent is built by composing individual blocks:

- prompts,

- tool definitions,

- retrievers,

- memory layers,

- output parsers,

- callback handlers.

LangChain remains the most recognized example of this style. Its ecosystem offers deep integrations across vector databases, APIs, search systems, loaders, and memory components, making it highly attractive for developers who want granular control.

The strength of modular frameworks lies in freedom. Nearly every behavior can be customized. The weakness is that freedom often means orchestration burden. Developers must manually think through state transitions, failure modes, and execution consistency.

For retrieval-heavy systems, custom enterprise copilots, and deeply integrated workflows, this category remains extremely useful. But as agents become longer-running and more autonomous, modular chains alone often begin to show strain.
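To make the "composable blocks" idea concrete, here is a rough sketch of a retriever, prompt template, model call, and output parser piped together. It does not use LangChain's API; `Chain`, `retrieve`, `build_prompt`, `fake_llm`, and `parse_output` are hypothetical names, and the model is a stub that simply echoes the retrieved context.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class Chain:
    """Runs each step on the output of the previous one."""
    steps: list[Callable[[Any], Any]] = field(default_factory=list)

    def pipe(self, step: Callable[[Any], Any]) -> "Chain":
        self.steps.append(step)
        return self

    def invoke(self, value: Any) -> Any:
        for step in self.steps:
            value = step(value)
        return value


# Illustrative in-memory "document store".
DOCS = {"refund": "Refunds are issued within 14 days."}


def retrieve(question: str) -> dict:
    hits = [text for key, text in DOCS.items() if key in question.lower()]
    return {"question": question, "context": " ".join(hits)}


def build_prompt(inputs: dict) -> str:
    return f"Context: {inputs['context']}\nQuestion: {inputs['question']}\nAnswer:"


def fake_llm(prompt: str) -> str:
    # Stand-in for a model call: echoes the context line with stray whitespace.
    return "  " + prompt.splitlines()[0].removeprefix("Context: ") + "  "


def parse_output(raw: str) -> str:
    return raw.strip()


chain = Chain().pipe(retrieve).pipe(build_prompt).pipe(fake_llm).pipe(parse_output)
result = chain.invoke("What is the refund policy?")
```

Every block is swappable, which is the appeal of this style. Note what the sketch leaves out: there is no state, retry, or branching logic, so those concerns fall to the developer.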

2. Graph-Based Workflow Frameworks: The Rise of Deterministic Agent Engineering

As agent tasks became more complex, the industry gradually realized that simple sequential tool loops were not enough.

Agents needed:

- branching paths,

- recursive loops,

- retry logic,

- interruptibility,

- persistent state,

- and explicit transitions between reasoning stages.

This led to the rise of graph-native orchestration.

LangGraph has become one of the defining names in this category. Instead of treating execution as a chain, it models execution as a directed graph where each node performs a defined action and edges determine what happens next under varying conditions.

This seemingly technical shift has enormous production implications.

Graph frameworks make it easier to:

- checkpoint long-running sessions,

- recover from tool failures,

- pause for human review,

- branch into specialized sub-agents,

- and inspect exactly where an agent made a poor decision.

For teams building mission-critical business workflows, this level of determinism is becoming less of a luxury and more of a necessity. In many ways, graph frameworks represent the movement of AI agents from “clever demos” to “serious software systems.”
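A toy version of graph-native orchestration helps show why these properties follow from the model. The sketch below is not LangGraph's API; `Graph`, `add_node`, and the `draft`/`review`/`publish` nodes are hypothetical. Each node transforms a state dictionary, a router function picks the next node, and a snapshot is recorded after every step.

```python
from typing import Callable, Optional

State = dict
Node = Callable[[State], State]
Router = Callable[[State], Optional[str]]  # next node name, or None to stop


class Graph:
    def __init__(self) -> None:
        self.nodes: dict[str, Node] = {}
        self.routers: dict[str, Router] = {}
        self.checkpoints: list[tuple[str, State]] = []

    def add_node(self, name: str, node: Node, router: Router) -> None:
        self.nodes[name] = node
        self.routers[name] = router

    def run(self, start: str, state: State, max_steps: int = 20) -> State:
        current: Optional[str] = start
        steps = 0
        while current is not None:
            if steps >= max_steps:
                raise RuntimeError("step budget exhausted")
            state = self.nodes[current](dict(state))
            # Snapshot after each node so a run can be inspected or resumed.
            self.checkpoints.append((current, dict(state)))
            current = self.routers[current](state)
            steps += 1
        return state


def draft(state: State) -> State:
    attempts = state["attempts"] + 1
    return {**state, "attempts": attempts, "draft": f"draft v{attempts}"}


def review(state: State) -> State:
    # Illustrative rule: only the second draft passes review.
    return {**state, "approved": state["attempts"] >= 2}


def publish(state: State) -> State:
    return {**state, "published": True}


graph = Graph()
graph.add_node("draft", draft, lambda s: "review")
graph.add_node("review", review, lambda s: "publish" if s["approved"] else "draft")
graph.add_node("publish", publish, lambda s: None)

final_state = graph.run("draft", {"attempts": 0})
visited = [name for name, _ in graph.checkpoints]
```

Because the path is recorded node by node, `visited` shows exactly where the run looped back from review to drafting, which is the kind of inspection a plain sequential chain does not offer.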

3. Role-Based Multi-Agent Frameworks: Organizational Logic Applied to Machines

A different school of thought emerged soon after. Rather than defining every execution node manually, why not define a team? This gave rise to role-based frameworks where agents are treated as specialized workers:

- a researcher,

- a writer,

- an analyst,

- a reviewer,

- a planner,

- a validator.

CrewAI popularized this pattern by allowing developers to assign roles, goals, and tasks in a highly intuitive declarative style.

The reason this framework category gained traction is simple: it mirrors human delegation. Business users can understand it. Non-specialist developers can prototype with it quickly. Internal teams can conceptualize workflows without needing to reason about every state machine transition.

This has made role-based systems highly attractive for:

- content pipelines,

- report generation,

- lead qualification,

- QA chains,

- market research workflows,

- and business back-office automations.

Importantly, these are precisely the kinds of agent systems that are increasingly being packaged as reusable deployable products rather than remaining internal experiments. That trend should not be ignored.
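The declarative, role-first style can be approximated in plain Python as follows. This is only a sketch of the pattern, not CrewAI's actual API: `Agent`, `Task`, and `run_crew` are hypothetical, and each agent's `work` function stands in for an LLM call conditioned on its role and goal.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Agent:
    role: str
    goal: str
    work: Callable[[str], str]  # stand-in for a role-conditioned model call


@dataclass
class Task:
    description: str
    agent: Agent


def run_crew(tasks: list[Task], initial_input: str) -> tuple[str, list[tuple[str, str]]]:
    """Run tasks in order, handing each agent the previous agent's output."""
    output = initial_input
    log: list[tuple[str, str]] = []
    for task in tasks:
        output = task.agent.work(output)
        log.append((task.agent.role, output))
    return output, log


researcher = Agent("researcher", "collect facts", lambda topic: f"notes on {topic}")
writer = Agent("writer", "produce a report", lambda notes: f"report based on {notes}")
reviewer = Agent("reviewer", "check quality", lambda text: f"{text} [approved]")

tasks = [
    Task("Research the topic", researcher),
    Task("Write the report", writer),
    Task("Review the report", reviewer),
]

report, log = run_crew(tasks, "agent frameworks")
```

The workflow reads like a delegation chart rather than a state machine, which is the main reason this style is approachable for non-specialists.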

4. Conversational Multi-Agent Frameworks: Debate, Negotiation, and Iterative Reasoning

Not all intelligent workflows are deterministic.

Some tasks require agents to challenge each other, refine assumptions, debate alternatives, or iteratively improve outputs through dialogue.

This produced the conversational multi-agent family.

Microsoft AutoGen became one of the best-known implementations of this style, allowing multiple agents to communicate through structured message exchanges. Microsoft’s broader enterprise orchestration initiatives continue to build on this philosophy.

Conversational frameworks are especially effective where:

- code needs iterative refinement,

- one agent must critique another,

- multiple hypotheses must be evaluated,

- or a human collaborator must remain in the loop.

These systems often feel less like workflow engines and more like supervised digital teams.

Their outputs can be impressively rich, though they also require careful token discipline and guardrails to avoid runaway conversational loops.
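The guardrail against runaway loops can be as simple as a turn budget plus an explicit termination signal. The sketch below is not AutoGen's API; `coder`, `critic`, and `converse` are hypothetical, and both agents are hard-coded stubs rather than model calls.

```python
def coder(messages: list[dict]) -> str:
    """Stub agent: emits a new revision each time it speaks."""
    revisions = sum(1 for m in messages if m["sender"] == "coder")
    return f"def add(a, b): return a + b  # revision {revisions + 1}"


def critic(messages: list[dict]) -> str:
    """Stub agent: approves only the second revision (illustrative rule)."""
    last = messages[-1]["content"]
    return "APPROVE" if "revision 2" in last else "Please add a revision."


def converse(task: str, max_turns: int = 6) -> list[dict]:
    """Alternate between two agents until approval or the turn budget runs out."""
    messages = [{"sender": "user", "content": task}]
    speakers = [("coder", coder), ("critic", critic)]
    for turn in range(max_turns):
        name, speak = speakers[turn % 2]
        reply = speak(messages)
        messages.append({"sender": name, "content": reply})
        if reply == "APPROVE":  # explicit termination signal
            break
    return messages


transcript = converse("Write an add function")
```

With real models, every extra turn re-sends the growing transcript, so `max_turns` bounds cost as well as preventing endless back-and-forth.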

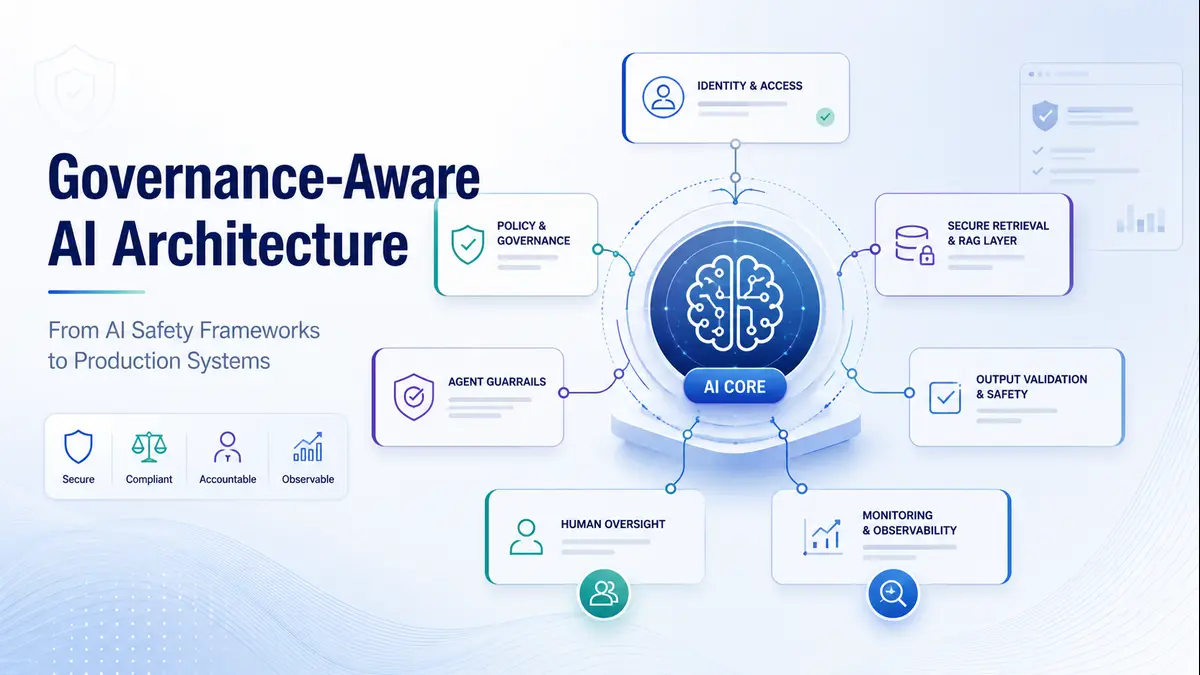

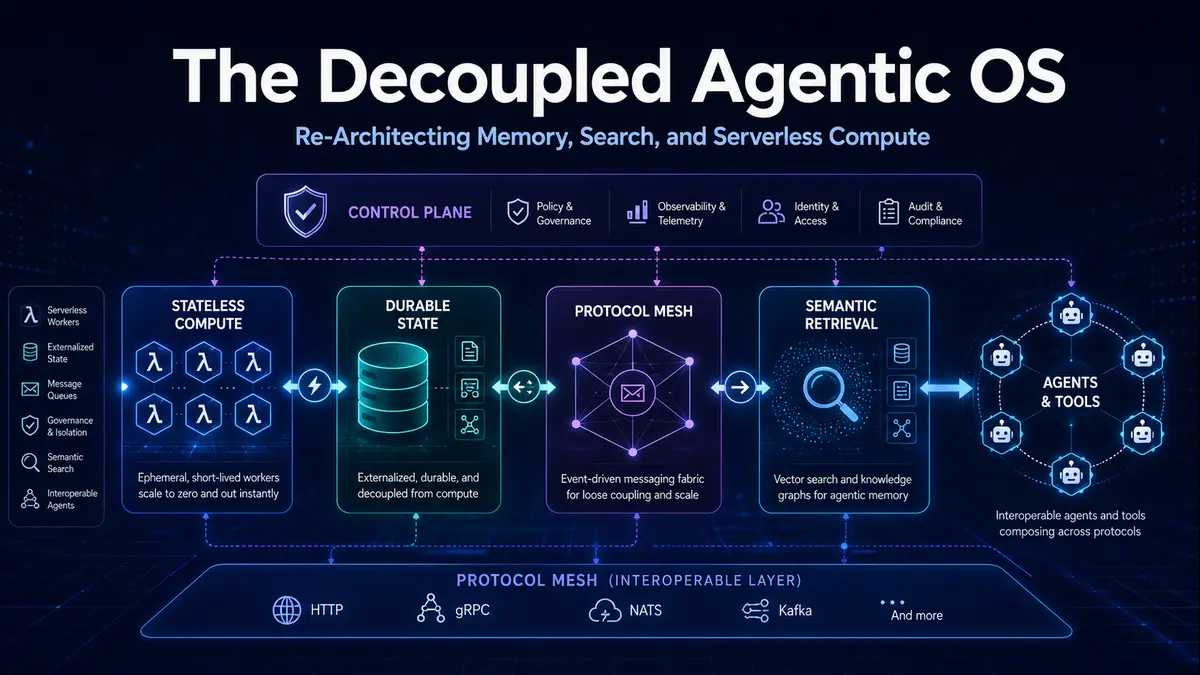

5. Enterprise and Platform-Native Frameworks: Governance Before Glamour

As the agent market matured, enterprises entered with a different set of priorities:

- security,

- auditability,

- cloud compliance,

- identity controls,

- observability,

- governed data access.

That demand created a class of platform-native frameworks such as:

- Semantic Kernel,

- LlamaIndex,

- OpenAI’s Agents SDK,

- Google ADK.

These frameworks are often less flashy in public discourse, but highly relevant in real deployments because they integrate deeply with cloud environments, document systems, enterprise permissions, and monitoring layers.

In highly regulated industries, framework choice is often dictated less by developer preference and more by governance architecture. This is where the market is becoming particularly interesting:

AI agents are no longer being viewed only as internal technical artifacts. They are increasingly being viewed as governed software assets.
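As a rough illustration of what "governed" means at the code level, the sketch below wraps tool calls in a permission check and an audit trail. It is an assumption-laden toy, not the API of any framework listed above: `GovernedToolbox`, its `call` method, and the role and tool names are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable


@dataclass
class GovernedToolbox:
    permissions: dict[str, set[str]]  # role -> tool names that role may call
    audit_log: list[dict] = field(default_factory=list)

    def call(self, role: str, tool_name: str, tool: Callable[[str], str], argument: str) -> str:
        allowed = tool_name in self.permissions.get(role, set())
        # Record every attempt, including denied ones, before doing anything.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "role": role,
            "tool": tool_name,
            "allowed": allowed,
        })
        if not allowed:
            raise PermissionError(f"{role} may not call {tool_name}")
        return tool(argument)


toolbox = GovernedToolbox(permissions={"analyst": {"read_report"}})

summary = toolbox.call("analyst", "read_report", lambda name: f"contents of {name}", "q3.pdf")

try:
    toolbox.call("analyst", "delete_report", lambda name: f"deleted {name}", "q3.pdf")
    denied = False
except PermissionError:
    denied = True
```

Logging before the permission check resolves means denied attempts are captured too, which is usually what an auditor wants to see.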

The Mistake Most Builders Make When Choosing a Framework

Many teams still choose a framework the same way they choose a JavaScript library: whichever looks easiest, whichever trends on social media, or whichever produces the quickest first demo. That is usually a mistake, because in agent systems the framework is not merely a coding convenience.

It defines:

- how expensive memory becomes,

- how hard observability becomes,

- how maintainable retries become,

- how reusable the agent becomes,

- and crucially, how portable the agent remains when moved across deployment environments.

An agent built for one-off internal use is one thing.

An agent designed to be deployed repeatedly, shared externally, integrated by customers, or distributed as a product requires a much more durable orchestration backbone. This distinction is beginning to shape a quiet but important shift in the market.

Developers are no longer asking only, “Can I build an agent?”

They are increasingly asking:

“Can this agent operate as a reusable product, integrate cleanly into external workflows, and deliver repeatable value beyond my own internal use?”

The Emergence of the Agent Economy

As frameworks become easier to use, deployment infrastructure becomes cheaper, and APIs become more standardized, one clear reality is emerging:

many AI agents being built today are not destined to remain isolated internal scripts.

They are becoming:

- standalone business tools,

- vertical research assistants,

- domain-specific workflow engines,

- operational copilots,

- and purchasable automation services.

In other words, the market is moving from agent creation toward agent distribution.

This matters because the next layer of competition will not simply be who can code an agent.

It will be who can:

- package it cleanly,

- expose it safely,

- allow users to discover it,

- and create repeatable usage around it.

For independent builders and smaller AI teams, that creates a new strategic possibility. A well-designed agent is no longer just a private engineering artifact. It can increasingly become a monetizable digital product in its own right.

The underlying framework therefore becomes the first infrastructural decision in a much larger lifecycle.

Choose with Long-Term Intent, Not Prototype Excitement

There is no universally superior framework.

- LangGraph may be ideal for stateful enterprise logic.

- CrewAI may dramatically accelerate business workflow prototyping.

- LlamaIndex may dominate document-grounded systems.

- Conversational Microsoft stacks may better suit engineering collaboration.

But the smartest teams are no longer choosing based only on present coding convenience.

They are choosing based on the full future lifecycle of the agent:

prototype, deploy, observe, scale, distribute, and potentially commercialize.

That is the more useful lens. Because in 2026, AI agents are no longer just pieces of experimental software. They are steadily becoming products, services, and digital workers with their own market presence. The frameworks underneath them are where that future quietly begins.

As more developers begin turning specialized agents into reusable digital products, discoverability and external distribution will matter just as much as orchestration. Poniak Labs is being built with that exact future in mind.
