Long-context models are reshaping AI system design. This article explores when RAG still matters, where it falls short, and what a hybrid future looks like.

For the past year or so, Retrieval-Augmented Generation (RAG) has quietly become the default architecture behind most serious AI systems. Enterprise copilots, internal knowledge tools, and research agents all tend to follow the same pattern: don’t rely on the model alone; retrieve the right information at runtime and ground the response in it.

For a while, that approach wasn’t just useful—it was necessary.

But things are starting to shift. Not in a dramatic “everything is broken” way, but in a more subtle, structural sense. As long-context models improve, a different question starts to emerge: if a model can read much more in a single pass, how much of the retrieval layer is still essential?

This isn’t about replacing one system with another overnight. Vector databases aren’t going away, and RAG is far from obsolete. But the role they play is changing. What used to be a hard dependency is now becoming more situational.

So the real question is no longer “RAG or not.” It’s: where does retrieval genuinely add value – and where is it compensating for limitations that are starting to disappear?

Why RAG Became the Default

To understand this shift, it helps to go back to the constraints that shaped the first generation of AI systems.

Earlier large language models had strict context limits—typically a few thousand tokens. That meant you simply couldn’t pass entire documents into the model. If the model didn’t already know something, it either guessed or hallucinated.

RAG solved this in a clean, modular way.

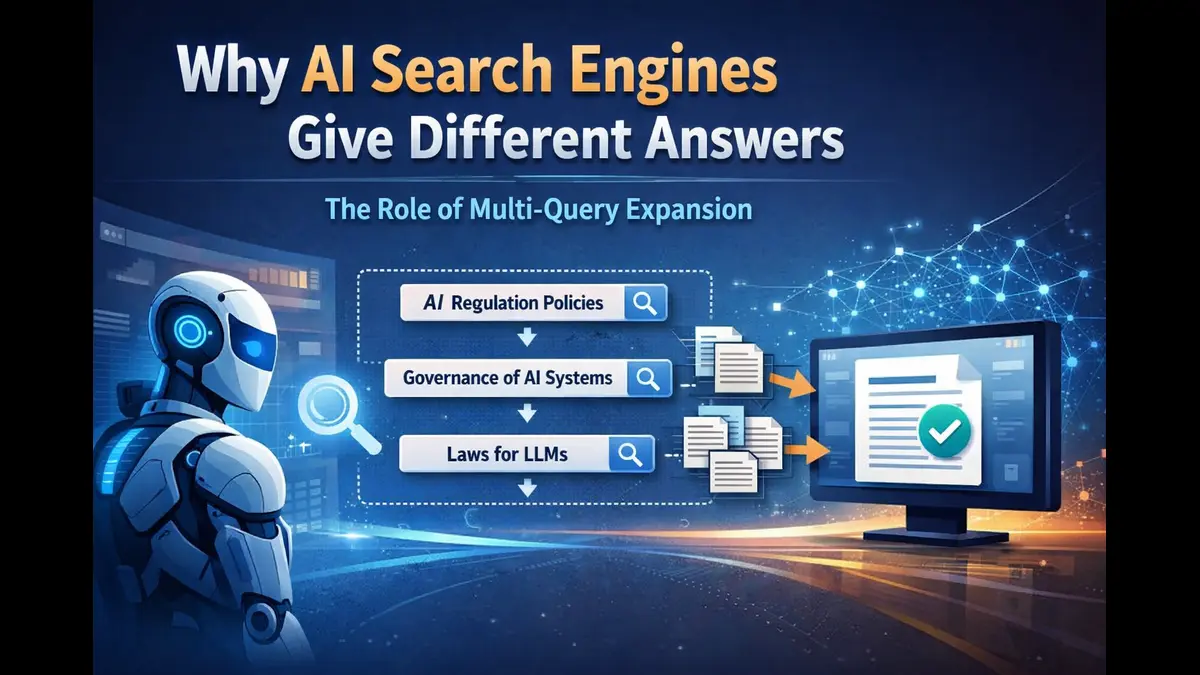

Instead of embedding knowledge inside model weights through fine-tuning, you externalize it:

- Documents are ingested and chunked

- Each chunk is converted into an embedding vector

- These vectors are stored in a vector index or database (e.g., FAISS, an HNSW-based index, Pinecone)

- At query time, you embed the query, run a similarity search (usually cosine similarity), and retrieve the top-k chunks

- Optionally rerank them using cross-encoders or LLM-based scoring

- Feed the final context into the model
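The steps above can be sketched end to end in plain Python. This is a minimal, self-contained illustration under stated assumptions, not a production design: the hashed bag-of-words `embed` function stands in for a real embedding model, the hypothetical in-memory `VectorIndex` class does brute-force search where FAISS or Pinecone would use an approximate index, chunk sizes are counted in words rather than model tokens, and the optional reranking step is left out.

```python
import heapq
import math
import re
import zlib

DIM = 1024  # size of the toy embedding space (illustrative assumption)


def embed(text: str) -> list[float]:
    """Toy embedding: hashed bag-of-words. A real system calls an embedding model here."""
    vec = [0.0] * DIM
    for token in re.findall(r"[a-z0-9]+", text.lower()):
        vec[zlib.crc32(token.encode()) % DIM] += 1.0
    return vec


def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def chunk(text: str, size: int = 50, overlap: int = 10) -> list[str]:
    """Fixed-size word windows with overlap (words stand in for tokens)."""
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break
    return chunks


class VectorIndex:
    """Hypothetical in-memory index: brute-force cosine search instead of HNSW."""

    def __init__(self) -> None:
        self.entries: list[tuple[str, list[float]]] = []

    def add_document(self, text: str) -> None:
        # Ingestion: chunk the document, embed each chunk, store (chunk, vector).
        for c in chunk(text):
            self.entries.append((c, embed(c)))

    def search(self, query: str, k: int = 3) -> list[str]:
        # Query time: embed the query, return the top-k chunks by cosine similarity.
        q = embed(query)
        best = heapq.nlargest(k, self.entries, key=lambda e: cosine(q, e[1]))
        return [text for text, _ in best]


def build_prompt(query: str, chunks: list[str]) -> str:
    # Feed the retrieved context to the model alongside the question.
    context = "\n---\n".join(chunks)
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


index = VectorIndex()
index.add_document("Revenue grew 12 percent, driven by the cloud segment.")
index.add_document("The board approved a new share buyback program.")
top = index.search("How did cloud revenue grow?", k=1)
prompt = build_prompt("How did cloud revenue grow?", top)
```

Swapping `embed` for a real model and `VectorIndex` for a real vector store changes the components, not the shape of the pipeline.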

This pipeline decoupled knowledge from the model and made systems dynamic. Updates didn’t require retraining. Costs were manageable. And grounding improved significantly.

RAG wasn’t chosen because it was elegant; it was chosen because it worked well under tight constraints.

The Friction Inside RAG Pipelines

But once you start building these systems at scale, the trade-offs become visible.

The first issue is context fragmentation. Most chunking strategies rely on fixed token windows, say 512 or 1,024 tokens with overlap. But real-world information doesn’t follow those boundaries. A single argument or dependency chain may span multiple chunks, and once it is split, the model never sees its full structure again.

Overlap helps, but it’s a partial fix: larger overlaps increase storage and compute costs without fully restoring coherence.
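The trade-off between overlap, storage, and coherence is easy to quantify. The sketch below uses hypothetical helper names and treats token positions as plain integers. It computes fixed-window chunk boundaries, the storage overhead that overlap introduces, and whether a given span of text (for example, an argument running from token 480 to token 620) survives intact inside at least one chunk.

```python
def chunk_spans(n_tokens: int, size: int, overlap: int) -> list[tuple[int, int]]:
    """Return [start, end) token spans for fixed-size windows with overlap."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must satisfy 0 <= overlap < size")
    step = size - overlap
    spans, start = [], 0
    while True:
        end = min(start + size, n_tokens)
        spans.append((start, end))
        if end == n_tokens:
            break
        start += step
    return spans


def storage_overhead(n_tokens: int, size: int, overlap: int) -> float:
    """Tokens stored across all chunks divided by tokens in the original document."""
    stored = sum(end - start for start, end in chunk_spans(n_tokens, size, overlap))
    return stored / n_tokens


def is_split(span_start: int, span_end: int, spans: list[tuple[int, int]]) -> bool:
    """True if no single chunk contains the whole [span_start, span_end) range."""
    return not any(s <= span_start and span_end <= e for s, e in spans)


for overlap in (0, 128, 256):
    spans = chunk_spans(10_000, 512, overlap)
    print(
        f"overlap={overlap:>3}  chunks={len(spans):>2}  "
        f"overhead={storage_overhead(10_000, 512, overlap):.2f}x  "
        f"480-620 split={is_split(480, 620, spans)}  "
        f"300-900 split={is_split(300, 900, spans)}"
    )
```

For a 10,000-token document with 512-token windows, 128 tokens of overlap stores 1.32x the original text and keeps the 140-token argument intact. At 256 tokens of overlap the overhead is close to 2x, and the 600-token argument is still split, because it is longer than any window.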

The second issue is retrieval accuracy. Embedding similarity (cosine or dot product) is a proxy for relevance, not a guarantee. In dense domains, especially finance or legal text, you often retrieve “nearby” content that shares vocabulary but doesn’t answer the actual question.

This is why many systems add reranking layers:

- Cross-encoders (BERT-style models scoring query–document pairs)

- LLM-as-a-judge approaches

These improve precision, but they also add latency and cost. The pipeline is no longer just retrieval; it’s multi-stage inference.
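A two-stage retrieve-then-rerank flow can be sketched as follows. Both scorers are toy stand-ins, not real models. `cheap_score` plays the role of first-stage keyword or vector search and ignores word order. `expensive_score` is a hypothetical substitute for a cross-encoder or LLM judge that rewards matching word order. What matters is the shape: the second stage runs once per candidate, so its latency and cost scale with `first_stage_n`.

```python
import heapq
import re
from typing import Callable

Scorer = Callable[[str, str], float]


def tokens(text: str) -> list[str]:
    return re.findall(r"[a-z0-9]+", text.lower())


def cheap_score(query: str, doc: str) -> float:
    """Stage-1 stand-in: share of query terms present in the doc (order ignored)."""
    q, d = set(tokens(query)), set(tokens(doc))
    return len(q & d) / len(q) if q else 0.0


def expensive_score(query: str, doc: str) -> float:
    """Hypothetical stage-2 stand-in: share of query bigrams found in the doc."""
    def bigrams(words: list[str]) -> set[tuple[str, str]]:
        return set(zip(words, words[1:]))

    q, d = bigrams(tokens(query)), bigrams(tokens(doc))
    return len(q & d) / len(q) if q else 0.0


def retrieve_then_rerank(
    query: str,
    corpus: list[str],
    first_stage_n: int = 20,
    k: int = 3,
    stage1: Scorer = cheap_score,
    stage2: Scorer = expensive_score,
) -> tuple[list[str], int]:
    # Stage 1: cheap scoring over the whole corpus; keep first_stage_n candidates.
    candidates = heapq.nlargest(first_stage_n, corpus, key=lambda d: stage1(query, d))
    # Stage 2: expensive scoring over the candidates only, one call per candidate.
    reranked = sorted(candidates, key=lambda d: stage2(query, d), reverse=True)
    return reranked[:k], len(candidates)


corpus = [
    "The swap rate of interest earned on deposits was unchanged.",
    "We use an interest rate swap to hedge floating-rate debt.",
    "The quarterly dividend was declared in March.",
]
results, stage2_calls = retrieve_then_rerank("interest rate swap", corpus, first_stage_n=2, k=1)
```

Both the deposit sentence and the hedging sentence contain all three query words, so the first stage cannot tell them apart. Only the order-aware second stage ranks the hedging sentence first, at the price of one extra scoring call per candidate.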

The third issue is system complexity. A production-grade RAG system usually involves:

- Ingestion pipelines (batch + streaming)

- Embedding versioning

- Vector index tuning (HNSW parameters such as M, ef_construction, and ef_search)

- Hybrid search (BM25 + dense retrieval)

- Metadata filtering

- Caching layers (Redis, semantic caching)

- Monitoring for index freshness and drift

What starts as “just retrieval” turns into a distributed system.
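Of the components listed above, hybrid search is the easiest to show in isolation. A common way to merge a lexical ranking with a dense one is reciprocal rank fusion (RRF), which scores each document as the sum of 1/(k + rank) over the input rankings. The sketch below pairs a small Okapi BM25 implementation with RRF. The dense ranking is simply passed in as a list of IDs, standing in for whatever a vector index would return. The values k1 = 1.5, b = 0.75, and k = 60 are conventional defaults, not tuned values.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    return re.findall(r"[a-z0-9]+", text.lower())


def bm25_rank(query: str, docs: dict[str, str], k1: float = 1.5, b: float = 0.75) -> list[str]:
    """Rank doc IDs by Okapi BM25 score for the query (highest first)."""
    tokenized = {doc_id: tokenize(text) for doc_id, text in docs.items()}
    n_docs = len(tokenized)
    avgdl = sum(len(t) for t in tokenized.values()) / n_docs
    # Document frequency: number of docs containing each term.
    df = Counter(term for toks in tokenized.values() for term in set(toks))

    def score(toks: list[str]) -> float:
        tf = Counter(toks)
        total = 0.0
        for term in set(tokenize(query)):
            if term not in tf:
                continue
            idf = math.log((n_docs - df[term] + 0.5) / (df[term] + 0.5) + 1)
            norm = tf[term] + k1 * (1 - b + b * len(toks) / avgdl)
            total += idf * tf[term] * (k1 + 1) / norm
        return total

    return sorted(tokenized, key=lambda doc_id: score(tokenized[doc_id]), reverse=True)


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Fuse ranked ID lists: score(d) = sum of 1 / (k + rank), with ranks starting at 1."""
    scores = Counter()
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    return [doc_id for doc_id, _ in scores.most_common()]


docs = {
    "d1": "Dense retrieval uses embeddings.",
    "d2": "BM25 is a lexical ranking function.",
    "d3": "Hybrid search combines BM25 and dense retrieval.",
}
lexical = bm25_rank("bm25 ranking", docs)
dense = ["d3", "d1", "d2"]  # assumed output of a vector index for the same query
fused = reciprocal_rank_fusion([lexical, dense])
```

RRF only needs ranks, not scores, so it sidesteps the problem that BM25 scores and cosine similarities live on different scales.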

And finally, there’s cost. Embeddings, storage, queries, reranking – all of it adds up. At scale, retrieval isn’t just a design choice – it’s an operational expense.

For a long time, all of this was justified, because the only alternative, feeding everything into the model, wasn’t viable.

What Long-Context Models Change

Long-context models change that equation.

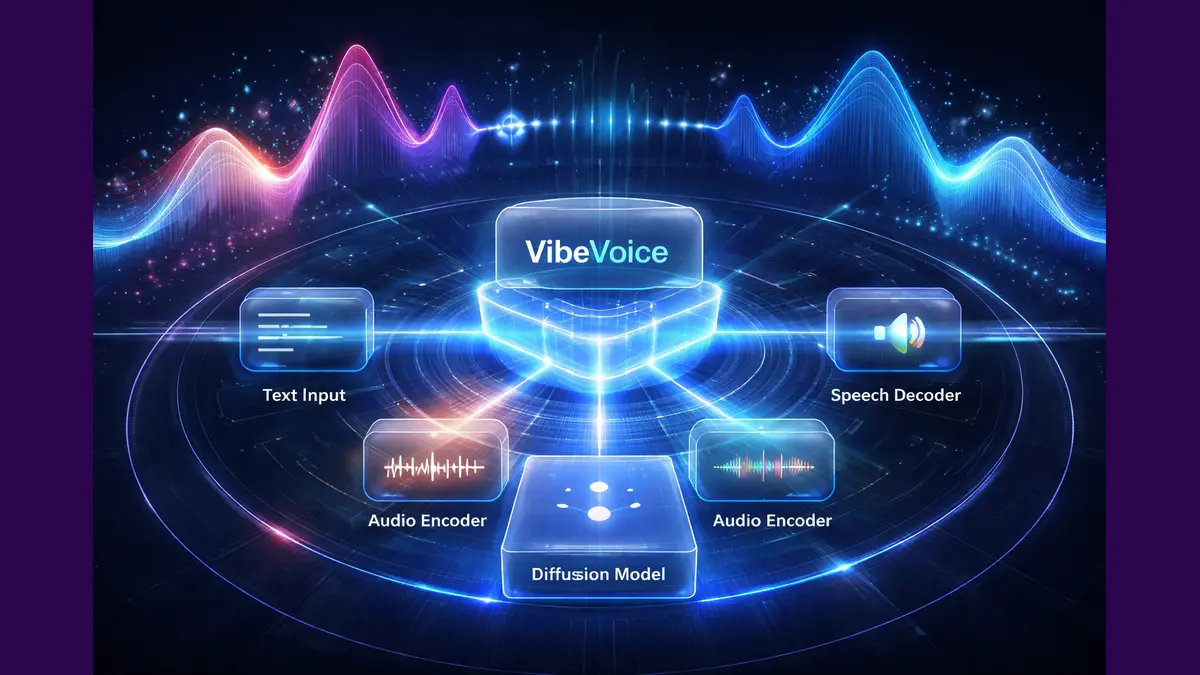

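Modern architectures rely on techniques such as:

- Rotary Position Embeddings (RoPE), which encode token positions as rotations and serve as the basis for several context-extension methods

- Grouped-Query Attention (GQA), which shrinks the key-value cache by letting groups of query heads share key and value heads

- FlashAttention, which computes exact attention in tiles without materializing the full attention matrix in GPU memory

Together, these allow models to process significantly longer sequences than before.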
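A little arithmetic shows why GQA and FlashAttention matter for long sequences. The sketch below uses an illustrative configuration (32 layers, 32 query heads, head dimension 128, 16-bit values, batch size 1), not the specs of any particular model. At a 128K-token context, it compares three quantities: the key-value cache with one KV head per query head (standard multi-head attention), the same cache with 8 shared KV heads (GQA), and the size of one layer's full attention-score matrix if it were materialized.

```python
GIB = 2**30


def kv_cache_bytes(seq_len: int, n_layers: int, n_kv_heads: int,
                   head_dim: int, bytes_per_elem: int = 2) -> int:
    """Keys and values (hence the factor 2) cached for every layer and KV head."""
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * bytes_per_elem


def attention_scores_bytes(seq_len: int, n_heads: int, bytes_per_elem: int = 2) -> int:
    """One seq_len x seq_len score matrix per query head, for a single layer."""
    return n_heads * seq_len * seq_len * bytes_per_elem


SEQ_LEN = 128 * 1024  # 131,072 tokens
LAYERS, Q_HEADS, HEAD_DIM = 32, 32, 128  # illustrative config, not a real model card

mha = kv_cache_bytes(SEQ_LEN, LAYERS, n_kv_heads=Q_HEADS, head_dim=HEAD_DIM)
gqa = kv_cache_bytes(SEQ_LEN, LAYERS, n_kv_heads=8, head_dim=HEAD_DIM)
scores = attention_scores_bytes(SEQ_LEN, Q_HEADS)

print(f"KV cache, multi-head attention: {mha / GIB:.0f} GiB")    # 64 GiB
print(f"KV cache, GQA with 8 KV heads:  {gqa / GIB:.0f} GiB")    # 16 GiB
print(f"Naive score matrix, one layer:  {scores / GIB:.0f} GiB") # 1024 GiB
```

Under these assumptions, GQA cuts the cache by 4x, because 32 query heads share 8 KV heads. A naive score matrix for a single layer would need about a terabyte, which is why attention at these lengths is computed in tiles rather than stored whole.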

Practically, this means:

- Entire documents can be passed in without chunking

- Multi-document reasoning becomes more feasible

- Relationships across distant sections can be preserved

Instead of asking a retrieval system to guess which fragments matter, you can let the model see more of the original structure.

Take a financial example. If you’re analysing multiple annual reports, the relevant signals are spread across:

- Management commentary

- Risk disclosures

- Segment-level performance

- Footnotes and appendices

In a chunked RAG setup, you might retrieve pieces of each – but rarely all of them in the right combination. With long context, you can pass full reports and let the model reason across them more naturally.
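Passing full reports is mostly a matter of packing whole documents into the prompt and checking the budget up front. Here is a minimal sketch. `Document`, `approx_tokens`, and `build_long_context_prompt` are hypothetical names. The four-characters-per-token estimate is a rough heuristic for English text, not a real tokenizer. The XML-style document tags are one common convention, not a requirement of any particular model.

```python
from dataclasses import dataclass


@dataclass
class Document:
    title: str
    text: str


def approx_tokens(text: str) -> int:
    # Rough heuristic (~4 characters per token for English prose). Swap in the
    # target model's tokenizer when the budget is tight.
    return max(1, len(text) // 4)


def build_long_context_prompt(question: str, docs: list[Document],
                              context_window: int, reserve_for_answer: int = 4_096) -> str:
    """Concatenate whole documents (no chunking) if they fit in the context window."""
    budget = context_window - reserve_for_answer - approx_tokens(question)
    blocks, used = [], 0
    for doc in docs:
        block = f'<document title="{doc.title}">\n{doc.text}\n</document>'
        cost = approx_tokens(block)
        if used + cost > budget:
            raise ValueError(f"{doc.title!r} exceeds the remaining budget of {budget - used} tokens")
        blocks.append(block)
        used += cost
    # Layout choice: all material first, the question last.
    return "\n\n".join(blocks) + f"\n\nQuestion: {question}"


reports = [
    Document("Annual report 2022", "Management commentary... Risk disclosures... Footnotes..."),
    Document("Annual report 2023", "Segment performance... Risk disclosures... Appendices..."),
]
prompt = build_long_context_prompt(
    "How did the risk disclosures change between 2022 and 2023?", reports, context_window=128_000
)
```

Failing loudly when a document does not fit is deliberate. Silently truncating a report would reintroduce the fragmentation problem that long context is meant to avoid.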

The same applies to contracts, research papers, or large codebases. These are not independent snippets; they are structured systems.

Long context preserves the inherent structure of the document.

The Limits of Long Context

For all its advantages, however, long context has real limitations.

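As sequence length increases, attention dilution becomes a real issue. Softmax attention spreads a fixed budget of weight across every token in the sequence, so the signal-to-noise ratio tends to drop as context grows. Efficiency optimisations make long inputs cheaper to process, but they don’t change how that attention is distributed.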

This leads to known effects:

- “Lost in the middle”: information placed mid-sequence is used less reliably than information near the start or end

- Difficulty with multi-hop reasoning across distant tokens

- Reduced reliability when multiple relevant facts must be combined

Benchmarks like “needle in a haystack” highlight this gap. Models can often find a single fact in long input, but struggle when reasoning requires connecting multiple such facts.

So while long context improves access, it doesn’t guarantee focus.

And that distinction matters when designing systems.
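The gap between single-fact and multi-fact retrieval is straightforward to probe yourself. The sketch below builds needle-in-a-haystack inputs with one or more facts placed at chosen relative depths, then scores an answer by how many expected facts it contains. The filler text, the needles, and the substring-matching scorer are illustrative assumptions, and the model call itself is left out.

```python
def make_haystack(filler: list[str], needles: list[str], depths: list[float]) -> str:
    """Insert each needle at a relative depth of the filler (0.0 = start, 1.0 = end)."""
    if len(needles) != len(depths):
        raise ValueError("need one depth per needle")
    doc = list(filler)
    # Insert the deepest needle first, so each later (shallower) insertion lands
    # at its computed index without being shifted by earlier insertions.
    for needle, depth in sorted(zip(needles, depths), key=lambda p: p[1], reverse=True):
        doc.insert(round(depth * len(filler)), needle)
    return " ".join(doc)


def score_answer(answer: str, expected: list[str]) -> float:
    """Fraction of expected facts that appear verbatim in the answer (case-insensitive)."""
    found = sum(1 for fact in expected if fact.lower() in answer.lower())
    return found / len(expected)


filler = [f"Filler sentence number {i}." for i in range(1_000)]

# Single-needle sweep: the same fact at five depths.
single = [make_haystack(filler, ["The vault code is 7481."], [d]) for d in (0.0, 0.25, 0.5, 0.75, 1.0)]

# Multi-needle probe: a correct answer has to combine both facts.
multi = make_haystack(
    filler,
    ["The vault code is 7481.", "The vault is in Lisbon."],
    [0.3, 0.7],
)
question = "What is the vault code, and in which city is the vault?"
```

Comparing `score_answer` across the depth sweep and the multi-needle case, on the same model and context length, separates “can it find a fact” from “can it combine facts.”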

Where Vector Databases Still Win

There are clear scenarios where retrieval remains essential.

That is the case when your dataset is:

- Massive (millions or billions of documents)

- Frequently updated

- Latency-sensitive

In those scenarios, full-context ingestion isn’t an option: it’s too expensive and too slow.

Vector search also excels when the problem is inherently selective – finding a few relevant items from a large pool.

In these cases, retrieval is not just useful; it’s a fundamental requirement.
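A back-of-the-envelope comparison makes the scale argument concrete. The hypothetical helper below compares two quantities: the tokens a model would have to read per query under full-context ingestion, and the tokens a top-k retrieval step passes along. The example numbers are illustrative assumptions, not measurements: one million documents of about 2,000 tokens each, a 1-million-token context window, and ten 512-token chunks.

```python
def full_context_vs_retrieval(n_docs: int, avg_doc_tokens: int, context_window: int,
                              top_k: int, chunk_tokens: int) -> dict[str, float]:
    corpus_tokens = n_docs * avg_doc_tokens
    return {
        "corpus_tokens": corpus_tokens,
        # Ceiling division: how many full context windows one pass over the corpus takes.
        "windows_per_query": -(-corpus_tokens // context_window),
        "retrieved_tokens_per_query": top_k * chunk_tokens,
        "reduction_factor": corpus_tokens / (top_k * chunk_tokens),
    }


estimate = full_context_vs_retrieval(
    n_docs=1_000_000, avg_doc_tokens=2_000, context_window=1_000_000, top_k=10, chunk_tokens=512
)
for name, value in estimate.items():
    print(f"{name}: {value:,.0f}")
```

Under those assumptions, a single query would need 2,000 full context windows, while retrieval passes along 5,120 tokens: a reduction of about 390,000x.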

Where Long Context Has the Advantage

On the other hand, when the dataset is bounded and the task requires synthesis, long context becomes more effective.

Examples:

- Comparing multiple financial reports

- Reviewing contract bundles

- Analyzing research papers

- Understanding medium-sized codebases

Here, the cost of fragmentation outweighs the benefits of retrieval.

Long context allows the model to:

- Preserve discourse flow

- Cross-reference distant sections

- Maintain logical continuity

It doesn’t eliminate retrieval – it reduces the need for aggressive chunking.

The Hybrid Direction

The most practical architecture going forward is hybrid.

In this setup:

- Vector databases act as a memory layer

- They retrieve relevant documents or sections at a higher level

- Long-context models consume these documents and perform reasoning

So instead of:

retrieve chunks → generate

you move toward:

retrieve documents → reason holistically

This separation is cleaner:

- Retrieval handles scale and recall

- The model handles synthesis and reasoning

Vector databases don’t disappear. They move up the stack.
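The “retrieve documents → reason holistically” pattern changes what the retrieval layer returns. Here is a minimal sketch, assuming the vector index still scores chunks and returns `(doc_id, score)` pairs. First, collapse those hits to document level by taking each document’s best chunk score. Then hand whole documents to the long-context model, in rank order, until a token budget is spent. The function names, document IDs, and token counts are hypothetical.

```python
def rank_documents(chunk_hits: list[tuple[str, float]]) -> list[str]:
    """Collapse chunk-level hits to document IDs, ranked by each doc's best chunk score."""
    best: dict[str, float] = {}
    for doc_id, score in chunk_hits:
        if doc_id not in best or score > best[doc_id]:
            best[doc_id] = score
    return sorted(best, key=best.__getitem__, reverse=True)


def select_documents(ranked_ids: list[str], doc_tokens: dict[str, int], budget: int) -> list[str]:
    """Greedily keep whole documents, in rank order, while they fit in the token budget."""
    chosen, used = [], 0
    for doc_id in ranked_ids:
        cost = doc_tokens[doc_id]
        if used + cost <= budget:
            chosen.append(doc_id)
            used += cost
    return chosen


hits = [("10-K-2023", 0.82), ("10-K-2022", 0.79), ("10-K-2023", 0.91), ("press-release", 0.40)]
sizes = {"10-K-2023": 120_000, "10-K-2022": 115_000, "press-release": 3_000}

ranked = rank_documents(hits)  # ['10-K-2023', '10-K-2022', 'press-release']
context_docs = select_documents(ranked, sizes, budget=200_000)  # ['10-K-2023', 'press-release']
```

The greedy loop skips a document that does not fit and keeps going, so a small, lower-ranked document can still be included. Stopping at the first miss is a reasonable alternative when rank order matters more than coverage.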

What This Means for Builders

For engineers and founders, this shift simplifies early decisions.

You don’t always need a full RAG pipeline on day one. For smaller datasets or bounded problems, you can:

- Feed more context directly

- Avoid premature chunking

- Focus on evaluation and reasoning quality

As systems scale, you can introduce retrieval where it actually adds value.
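One way to make “introduce retrieval where it actually adds value” operational is an explicit routing rule. The sketch below is a hypothetical heuristic, not an established formula. It sends a query to full-context mode when the relevant corpus fits comfortably in the window, and to retrieval otherwise. The `headroom` factor is an assumption meant to leave slack for the attention-dilution effects described earlier; its value should come from your own evaluations.

```python
def choose_strategy(corpus_tokens: int, context_window: int,
                    reserve_for_answer: int = 8_000, headroom: float = 0.5) -> str:
    """Return 'full_context' if the corpus fits within a fraction of the usable window."""
    if not 0 < headroom <= 1:
        raise ValueError("headroom must be in (0, 1]")
    usable = (context_window - reserve_for_answer) * headroom
    return "full_context" if corpus_tokens <= usable else "retrieval"


print(choose_strategy(corpus_tokens=60_000, context_window=200_000))     # full_context
print(choose_strategy(corpus_tokens=5_000_000, context_window=200_000))  # retrieval
```

Starting with a rule this simple keeps the decision visible. It can later grow to account for update frequency or latency targets once those actually constrain the system.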

So the emphasis shifts from:

“How do we retrieve better?”

to:

“How do we help the model think better?”

And that’s a more meaningful problem to solve.