OpenAI’s planned GPT-5.5-Cyber rollout and Anthropic’s Claude Mythos Preview point to a new AI trend: powerful models for cybersecurity and other high-risk domains may not be open to everyone. This shift could improve digital defense, but it also raises serious questions around surveillance, competition, government influence, and private control over frontier AI.

For most of the generative AI era, the industry followed a simple pattern: build a more powerful model, release it through a product or API, and let users discover what it can do.

That pattern is now beginning to change.

The latest signal comes from cybersecurity. OpenAI is preparing a restricted rollout of GPT-5.5-Cyber to selected cybersecurity defenders, while Anthropic has already introduced Claude Mythos Preview through its restricted Project Glasswing program. These models are not being released like everyday chatbots. They are being placed behind gates, offered to selected partners, and positioned for high-stakes defensive use cases.

The reason is simple: cybersecurity AI can defend systems, or it can help attack them.

The same model that can help a defender find a vulnerability in critical infrastructure may also help an attacker exploit it. The same system that can assist penetration testing may also lower the skill barrier for offensive operations. The same AI that can secure a bank, hospital, or software supply chain may also become a tool for those trying to compromise them.

This is why GPT-5.5-Cyber and Claude Mythos are not just model announcements. They point to a larger trend: the rise of restricted-access AI.

The next phase of artificial intelligence may not be defined only by bigger benchmarks or more general intelligence. It may be defined by access. Who gets the most capable models? Who is denied? Who decides? And what happens when powerful AI becomes available first to governments, large enterprises, and selected security vendors — but not to the general public?

Cybersecurity Is Becoming the First Test Case

Cybersecurity is the natural place for this shift to begin.

Unlike many consumer AI use cases, cybersecurity has immediate real-world consequences. A strong AI model can help defenders analyze code, reverse-engineer malware, identify weak points, simulate attacks, and prioritize patches. Used responsibly, that can strengthen the digital infrastructure that modern society depends on.

But those same capabilities can be dangerous in the wrong hands.

Anthropic’s Claude Mythos Preview shows how serious this has become. Anthropic describes Mythos as a general-purpose model that performs strongly across many tasks but is especially capable at computer security work. The company launched Project Glasswing to give selected partners access to the model for finding and fixing vulnerabilities in critical software systems.

OpenAI is moving in a similar direction. Sam Altman said OpenAI is starting the rollout of GPT-5.5-Cyber, a frontier cybersecurity model, to critical cyber defenders. OpenAI’s broader Trusted Access for Cyber program already gives vetted defenders access to more cyber-capable models, including GPT-5.4-Cyber, through tiered access and authentication.

GPT-5.5-Cyber is therefore not a fully public launch. It is better understood as a restricted, early-stage rollout for selected defenders.

That distinction matters.

It shows that the leading AI labs are converging on the same conclusion: the most capable cyber models may be too sensitive for broad public release.

From Open Access to Trusted Access

The early story of AI felt democratic. Developers could access powerful models through APIs. Startups could build products quickly. Independent researchers could experiment. Students could learn. Small companies could compete with larger firms by using the same underlying intelligence layer.

That openness helped accelerate innovation.

But as AI models become more capable in high-risk domains, open access becomes more complicated. Cybersecurity is only the beginning. Similar questions will likely emerge in biology, financial systems, military planning, surveillance analytics, autonomous agents, and infrastructure management.

OpenAI’s Trusted Access for Cyber program reflects this shift directly. The company describes the program as a way for vetted enterprise customers and cybersecurity practitioners to use powerful models for dual-use cybersecurity work, while recognizing that malicious actors may try to exploit the same tools to increase the scale and sophistication of attacks.

That phrase, dual-use, is the heart of the debate.

A dual-use technology can be used for both protection and harm. A vulnerability scanner can help secure a hospital, but it can also help attack one. A malware analysis tool can help a defender understand a threat, but it can also teach an attacker how to improve. A model that automates penetration testing can improve corporate security, but it can also make cybercrime easier for less-skilled actors.

This is why restricted access may become the default for the most sensitive vertical AI systems.

The Case for Restricted AI

There is a strong argument in favor of restricted access.

First, cyber defenders need better tools. Attackers only need to find one weak point. Defenders must protect every critical system, every employee account, every cloud service, every endpoint, and every software dependency. That imbalance has always made cybersecurity difficult.

AI could help reduce that gap.

A specialized cyber model can assist security teams by finding vulnerabilities faster, explaining risks, generating remediation steps, testing code, and prioritizing threats. For under-resourced teams, that could be extremely valuable.

Second, critical infrastructure needs protection before a crisis occurs. Banks, hospitals, telecom networks, logistics systems, energy grids, and government platforms are not ordinary software assets. They are part of the operating system of modern society. If powerful AI can help secure them, controlled access may be justified.

Third, trusted access creates accountability. A public model can be used anonymously or at massive scale. A restricted program can require identity verification, organizational approval, logging, monitoring, and revocation. These controls are imperfect, but they create friction against misuse.
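
To make those controls concrete, here is a minimal Python sketch of how identity verification, organizational approval, logging, and revocation could combine into a single access check. It is purely illustrative: the names `Requester`, `AccessGate`, `identity_verified`, and `org_approved` are hypothetical and do not describe how OpenAI's or Anthropic's programs actually work.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Requester:
    """Hypothetical record for someone asking to use a gated model."""
    user_id: str
    identity_verified: bool
    org_approved: bool


@dataclass
class AccessGate:
    """Illustrative gate: verify, approve, log every decision, allow revocation."""
    revoked: set = field(default_factory=set)
    audit_log: list = field(default_factory=list)

    def revoke(self, user_id: str) -> None:
        # Revocation takes effect on the next check for this user.
        self.revoked.add(user_id)

    def check(self, requester: Requester) -> bool:
        allowed = (
            requester.identity_verified
            and requester.org_approved
            and requester.user_id not in self.revoked
        )
        # Record denials as well as approvals so misuse attempts leave a trace.
        timestamp = datetime.now(timezone.utc).isoformat()
        self.audit_log.append((timestamp, requester.user_id, allowed))
        return allowed


gate = AccessGate()
analyst = Requester("analyst-1", identity_verified=True, org_approved=True)
print(gate.check(analyst))  # checked while the account is in good standing
gate.revoke("analyst-1")
print(gate.check(analyst))  # checked again after revocation
```

Even in this toy form, the friction is visible: every request is tied to an identity, every decision is recorded, and access can be withdrawn after the fact. None of that is possible with anonymous public use.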

Fourth, defenders cannot be left behind. If malicious actors eventually obtain similar AI capabilities through leaks, open models, stolen systems, or independent development, then responsible defenders need advanced tools too. Waiting too long could make the defensive side weaker.

This is the best argument for OpenAI’s and Anthropic’s approach. They are not simply hiding capability. They are trying to place the strongest tools first in the hands of people who are supposed to protect critical systems.

The Risks: Surveillance and Power Concentration

But restricted AI also creates serious risks.

The first risk is government surveillance.

Cybersecurity and national security are closely connected. Governments have a legitimate interest in protecting infrastructure from hostile states, criminal groups, and cyberattacks. But once powerful models are distributed through trusted programs, governments may become central gatekeepers.

That raises uncomfortable questions.

Could similar models be used for surveillance? Could they be used to monitor citizens, journalists, employees, activists, or political opponents? Could tools originally justified for infrastructure defense expand into intelligence gathering or social control?

This is not paranoia. It is a governance question.

Technology built for protection can easily become technology built for observation. The same AI that detects abnormal network activity can be adapted to detect abnormal human behavior. The same access-control logic used in cyber defense can be extended into policing, border control, financial monitoring, and public-sector analytics.

That does not mean government involvement is always bad. Public institutions have a role in national cyber defense. But powerful AI access cannot be governed only through private arrangements and closed-door partnerships. There must be transparency, auditability, and democratic oversight.

The second risk is corporate concentration.

If only the largest companies, security vendors, banks, cloud providers, and government contractors get access to the strongest models, they gain an enormous advantage. Smaller firms, open-source maintainers, universities, independent security researchers, and companies in developing markets may be left with weaker tools.

That could widen the security gap.

Large enterprises may become better protected, while smaller organizations remain exposed. This is especially dangerous because much of the world’s software infrastructure depends on open-source projects maintained by small teams. If frontier cyber AI is available mainly to wealthy institutions, the parts of the internet with the least funding may remain the most vulnerable.

Anthropic’s Project Glasswing does include a focus on securing critical software and supporting open-source security efforts, which is a positive step. But the broader problem remains: restricted access can easily become privileged access.

The third risk is private governance.

When AI labs decide who can use powerful models, they begin to act like regulators. They decide which companies are trusted. They decide which researchers qualify. They decide which use cases are acceptable. But these labs are private companies, not elected institutions.

That creates a new kind of power.

OpenAI, Anthropic, Google, Meta, and other AI companies may increasingly shape who can access the most advanced capabilities in society. Their decisions will influence security, research, business competition, and even state capacity.

This does not mean these companies are acting irresponsibly. In many cases, restricted release may be the safer choice. But society should be honest about what is happening: private AI labs are becoming gatekeepers of strategic capability.

The Government Angle Will Only Grow

Government involvement in this trend is unavoidable.

Cybersecurity is national security. A cyberattack on a hospital, airport, bank, satellite network, or energy grid is not just a technical incident. It can become a public safety issue. As AI models become more capable in cyber operations, governments will naturally want visibility into how they are deployed.

Recent reporting has already pointed to government concern around Anthropic’s Mythos access plans. Separately, Reuters reported that unauthorized users had accessed Anthropic’s Mythos model through what was believed to be a third-party vendor environment, although the reported unauthorized use was not for cybersecurity purposes.

That incident highlights a hard truth: restricted models themselves become high-value targets.

If a model is powerful enough to be gated, it is also powerful enough to be attacked, copied, leaked, or misused through compromised access. So the governance challenge is not only about who should receive access. It is also about how access is secured after approval.

This is where governments, labs, security companies, and civil society will need clearer rules.

The future may require formal access tiers, independent audits, abuse reporting, usage transparency, model capability evaluations, and stronger protections for researchers who report misuse. Without these mechanisms, “trusted access” can become a vague label instead of a reliable governance model.
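
As one illustration of what formal access tiers and usage transparency could look like in practice, the Python sketch below maps hypothetical tiers to hypothetical capability labels and produces an aggregate summary of the kind a transparency report might publish. The tier names, capability labels, and function names are invented for this example and are not drawn from any lab's actual program.

```python
from collections import Counter
from enum import IntEnum


class Tier(IntEnum):
    """Hypothetical access tiers, ordered from least to most trusted."""
    PUBLIC = 0
    VETTED = 1
    CRITICAL_DEFENDER = 2


# Hypothetical mapping from a capability to the minimum tier allowed to use it.
REQUIRED_TIER = {
    "explain_vulnerability": Tier.PUBLIC,
    "analyze_malware": Tier.VETTED,
    "generate_exploit_poc": Tier.CRITICAL_DEFENDER,
}


def is_permitted(tier: Tier, capability: str) -> bool:
    """Allow a request only if the tier meets the capability's minimum."""
    required = REQUIRED_TIER.get(capability)
    # Capabilities missing from the mapping are denied by default.
    return required is not None and tier >= required


def transparency_summary(requests):
    """Count granted and denied requests per capability, without identities."""
    summary = Counter()
    for tier, capability in requests:
        outcome = "granted" if is_permitted(tier, capability) else "denied"
        summary[(capability, outcome)] += 1
    return dict(summary)


requests = [
    (Tier.PUBLIC, "explain_vulnerability"),
    (Tier.PUBLIC, "generate_exploit_poc"),
    (Tier.CRITICAL_DEFENDER, "generate_exploit_poc"),
]
print(transparency_summary(requests))
```

The design choice worth noting is that the rules live in one explicit, inspectable table. An independent auditor could review that table and the published counts without seeing any individual user's activity, which is what would turn "trusted access" from a label into something checkable.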

The Larger Trend: Vertical AI Behind Gates

The bigger story is not only cybersecurity.

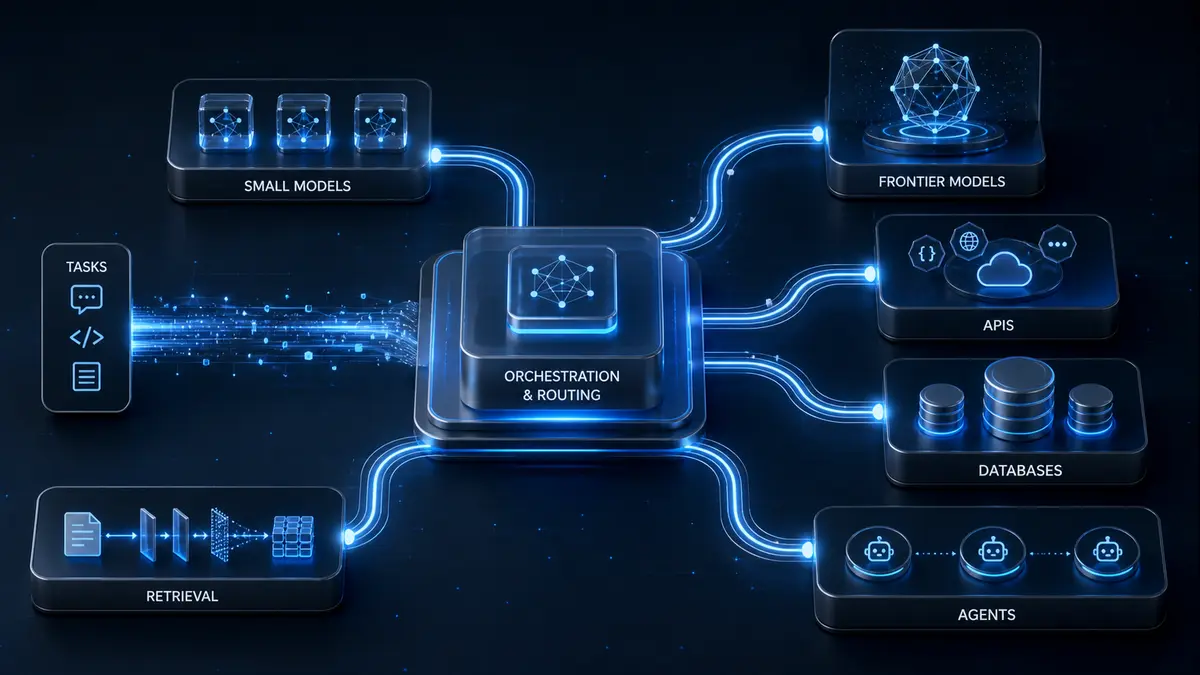

GPT-5.5-Cyber and Claude Mythos may be early examples of a broader shift toward vertical AI models that are powerful but not universally available.

In healthcare, models that can accelerate drug discovery may also raise biosecurity concerns. In finance, models that can detect fraud may also support market manipulation. In defense, models that can improve logistics may also support targeting systems. In law enforcement, models that can detect threats may also enable mass surveillance.

As AI becomes more specialized, the access question becomes more important.

The old question was: “How intelligent is the model?”

The new question may be: “Who is allowed to use that intelligence?”

That is a profound shift.

For startups and independent builders, this trend creates both opportunity and risk. The opportunity is that vertical AI will become one of the most important markets of the next decade. Models built for cybersecurity, finance, law, medicine, manufacturing, and public infrastructure may outperform general-purpose systems in real workflows.

But the risk is that access may become concentrated. If only large institutions can use the strongest vertical models, smaller companies may be forced to build on weaker public tools. That could make the AI economy less open, less competitive, and more dependent on a few dominant labs.

Useful AI May Not Always Be Public AI

GPT-5.5-Cyber and Claude Mythos reveal a future that is both practical and uncomfortable.

On one side, restricted AI makes sense. Powerful cyber models can help defenders protect critical systems. They can reduce response times, improve vulnerability discovery, and strengthen infrastructure before attackers strike. In a world of rising cyber threats, giving advanced tools to trusted defenders is not just reasonable; it may be necessary.

On the other side, restricted AI raises hard questions. It can strengthen government surveillance. It can give large companies an undue advantage. It can leave smaller organizations behind. It can turn private AI labs into gatekeepers of strategic capability.

The future of AI will not be simply open or closed.

It will be governed.

The real challenge is to design access systems that protect society without concentrating too much power. That means trusted access must come with transparency. Government involvement must come with oversight. Corporate access must not exclude open-source security or smaller defenders. And powerful AI labs must explain not only what their models can do, but who gets to use them and why.

The next generation of AI may not be available to everyone.

That may be necessary.

But it must not become an excuse to build a future where only governments and the largest corporations have access to the strongest intelligence systems.

Because in cybersecurity, as in society, safety without accountability can quietly become control.