Anthropic’s partnership with SpaceX is not just about higher Claude Code limits. It signals a deeper shift in artificial intelligence: the next phase of competition may be decided not only by better models, but by who controls compute, energy, data centers, and infrastructure at scale.

The AI Race Is Moving From Models to Machines

For the last few years, the artificial intelligence industry has been judged mainly by model capability. Every major release was compared through benchmarks, reasoning scores, coding ability, context length, multimodal performance, and enterprise readiness. The public conversation was simple: which model is smarter?

That question still matters. But it is no longer enough.

Anthropic’s new compute partnership with SpaceX shows that the next phase of AI competition is moving beneath the surface. The real battle is no longer only about model architecture. It is about power supply, GPU access, data center capacity, networking, cooling, inference reliability, and the ability to serve millions of users without constantly throttling demand.

The partnership is meant to substantially increase Anthropic’s compute capacity. The immediate user-facing result is straightforward: higher usage limits for Claude Code and the Claude API. But the strategic meaning is much larger. Anthropic is not simply improving a product feature. It is strengthening its position in the infrastructure race toward frontier AI.

This matters because artificial intelligence is no longer a laboratory experiment. It is becoming an operating layer for software development, finance, research, legal work, enterprise automation, and agentic workflows. When AI tools move from occasional chat interfaces to always-on work systems, capacity becomes destiny.

What Anthropic Actually Announced

Anthropic’s announcement had two parts.

First, it increased usage limits. Claude Code’s five-hour rate limits are being doubled for Pro, Max, Team, and seat-based Enterprise plans. The company is also removing peak-hour limit reductions for Claude Code on Pro and Max accounts. For developers, this is not a small quality-of-life change. It directly affects how long they can work with Claude Code before hitting restrictions during serious coding sessions. Anthropic also said it is raising Claude Opus API rate limits considerably.

Second, Anthropic confirmed a new compute agreement with SpaceX. The company said it had signed an agreement to use all of the compute capacity at SpaceX’s Colossus 1 data center. According to Anthropic, that gives it access to more than 300 megawatts of new capacity and over 220,000 NVIDIA GPUs within the month.

These numbers matter. AI capacity is not an abstract concept. Every prompt, code generation request, autonomous workflow, retrieval call, and reasoning loop consumes compute. A coding agent like Claude Code does not merely generate a few lines of text. It reads files, reasons through context, proposes edits, executes multi-step workflows, and often interacts repeatedly with the developer’s project structure. That makes it more compute-intensive than a basic chatbot session.
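As a rough sanity check, the two quoted figures can be divided to see what they imply per chip. The sketch below uses only the announcement's numbers; both are lower bounds ("more than" and "over"), so the ratio is indicative, and it reflects facility-level power (cooling, networking, and other overhead included), not the draw of a single accelerator.

```python
# Back-of-envelope check using only the two figures quoted in the announcement.
# Both are lower bounds, so treat the result as a rough indication.
FACILITY_POWER_MW = 300   # "more than 300 megawatts"
GPU_COUNT = 220_000       # "over 220,000 NVIDIA GPUs"


def facility_watts_per_gpu(power_mw: float, gpu_count: int) -> float:
    """Average facility power per GPU in watts (includes cooling, networking, etc.)."""
    return power_mw * 1_000_000 / gpu_count


watts = facility_watts_per_gpu(FACILITY_POWER_MW, GPU_COUNT)
print(f"~{watts:,.0f} W of facility power per GPU")  # prints "~1,364 W of facility power per GPU"
```

Roughly 1.4 kilowatts of facility power per GPU is a reminder that the megawatt figure and the GPU figure describe the same constraint from two sides.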

In traditional software, scaling means adding servers and optimizing cloud architecture. In frontier AI, scaling means securing specialized accelerators, stable power contracts, high-speed interconnects, data center sites, cooling systems, and massive capital commitments. The old software playbook still matters, but AI has brought hardware back to the center of strategy.

Why Claude Code Is Central to This Story

Claude Code is important because it represents where enterprise AI is heading. It is not just another chatbot for asking questions. It is an agentic coding tool that can understand a codebase, edit files, run commands, and integrate with development workflows. Anthropic’s documentation describes it as a tool available across terminal, IDE, desktop app, and browser environments.

That distinction is critical. A chatbot gives suggestions. A coding agent participates in execution.

For developers, the difference is practical. A chatbot may explain why a bug exists. A coding agent can inspect the repository, suggest a fix, edit the relevant files, run tests, and continue the loop. This makes the tool more useful, but also more demanding. Longer sessions, deeper context windows, more file operations, and repeated reasoning cycles all increase compute pressure.

That is why higher usage limits matter. They are not just marketing. They are part of the user experience.

If a developer is rebuilding a backend service, refactoring a React component library, writing database migrations, or reviewing a production bug, the agent must stay available long enough to complete the workflow. A tool that stops halfway through a complex task loses trust. In software engineering, interruption is expensive. Momentum is not a soft metric; it is productivity.
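To make the effect of a doubled limit concrete, here is a minimal sketch of a rolling-window usage budget. Everything in it is an assumption for illustration: the class name `RollingWindowBudget`, the per-step cost, and the one-step-per-minute pace are hypothetical, and Anthropic has not described its limit mechanics in these terms. Only the five-hour window comes from the announcement.

```python
from collections import deque


class RollingWindowBudget:
    """Hypothetical usage budget over a rolling time window (illustrative only)."""

    def __init__(self, capacity: float, window_seconds: float):
        self.capacity = capacity
        self.window_seconds = window_seconds
        self._events = deque()  # (timestamp, cost) pairs, oldest first
        self._used = 0.0

    def try_spend(self, cost: float, now: float) -> bool:
        # Release usage that has aged out of the window.
        while self._events and now - self._events[0][0] >= self.window_seconds:
            _, old_cost = self._events.popleft()
            self._used -= old_cost
        if self._used + cost > self.capacity:
            return False  # throttled
        self._events.append((now, cost))
        self._used += cost
        return True


def steps_before_throttle(capacity: float,
                          cost_per_step: float = 1.0,
                          seconds_per_step: float = 60.0,
                          window_seconds: float = 5 * 3600,
                          max_steps: int = 10_000) -> int:
    """Count agent steps completed before the first refusal (capped at max_steps)."""
    budget = RollingWindowBudget(capacity, window_seconds)
    steps, now = 0, 0.0
    while steps < max_steps and budget.try_spend(cost_per_step, now):
        steps += 1
        now += seconds_per_step
    return steps


print(steps_before_throttle(100))  # 100 steps under the assumed original budget
print(steps_before_throttle(200))  # 200 steps once the budget is doubled
```

Under these assumed numbers, the session that used to stall after 100 agent steps now runs for 200 before hitting the wall, which is the difference between finishing a refactor and abandoning it midway.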

Anthropic appears to understand this. By tying the SpaceX compute deal directly to Claude Code limits, the company is showing that infrastructure decisions now translate directly into product experience.

Compute Is Becoming the New Moat

For years, the AI moat was described through data, talent, research depth, and model quality. Those still matter. But compute is becoming an equally important moat.

The reason is simple. If two labs have strong models, the one with more reliable compute can serve more users, support longer sessions, loosen rate limits, offer better latency, and absorb enterprise demand. That company can also experiment faster internally. Training, fine-tuning, inference optimization, safety evaluations, and agent testing all become easier when capacity is available.

Anthropic’s latest deal also sits inside a much larger compute expansion strategy. In the same announcement, the company referenced several other major infrastructure commitments, including an agreement with Amazon for up to 5 gigawatts, a 5-gigawatt agreement with Google and Broadcom beginning in 2027, a Microsoft and NVIDIA partnership involving $30 billion of Azure capacity, and a $50 billion American AI infrastructure investment with Fluidstack.
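For a sense of proportion, the power-denominated commitments above can be tallied alongside the Colossus 1 figure. This is only a rough sketch: it mixes an upper bound ("up to 5 gigawatts") with a lower bound ("more than 300 megawatts"), and it leaves out the Microsoft, NVIDIA, and Fluidstack deals because the article states those in dollars, not watts.

```python
# Power-denominated commitments as quoted in the article, in gigawatts.
# Mixed bounds ("up to", "more than") make this a rough tally, not a forecast.
commitments_gw = {
    "Amazon (up to)": 5.0,
    "Google and Broadcom (from 2027)": 5.0,
    "SpaceX Colossus 1 (more than)": 0.3,
}

total_gw = sum(commitments_gw.values())

for name, gw in commitments_gw.items():
    share = gw / total_gw
    print(f"{name}: {gw:.1f} GW ({share:.1%} of tallied capacity)")

print(f"Tallied total: {total_gw:.1f} GW")
```

On these figures, Colossus 1 is a small slice of the tallied total. Its significance lies less in its size than in its timing: it is capacity the announcement describes as available within the month.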

That list tells the real story. Anthropic is not betting on one cloud provider or one hardware source. It is building a diversified compute stack across AWS Trainium, Google TPUs, NVIDIA GPUs, and multiple infrastructure partners.

This is a serious strategic move. In classical business terms, Anthropic is reducing supplier concentration risk. In AI terms, it is making sure that model growth, user demand, and enterprise adoption are not trapped by a single bottleneck.

The older technology industry was built on software eating the world. The new AI industry may be built on software eating power grids.

Why SpaceX Is an Unusual but Logical Partner

At first glance, Anthropic and SpaceX look like an unlikely pairing. Anthropic is known for its safety-focused positioning and enterprise AI products. SpaceX is known for rockets, satellites, Starlink, and massive engineering execution. But when viewed through the lens of infrastructure, the partnership makes more sense.

AI data centers require extreme execution discipline. They demand large-scale power planning, hardware deployment, logistics, facilities management, networking, and continuous operations. SpaceX has spent years building complex physical systems under difficult constraints. That culture is not the same as that of a traditional cloud provider, but it is relevant to the new AI infrastructure era.

The Colossus 1 facility is especially notable because of its scale. xAI’s announcement says Colossus 1 includes over 220,000 NVIDIA GPUs, including H100, H200, and GB200 accelerators. These are the kinds of chips used for frontier-scale training, fine-tuning, inference, multimodal systems, scientific workloads, and high-performance computing.

This means Anthropic is not merely renting ordinary server capacity. It is gaining access to a large pool of specialized AI compute that can support demanding model workloads.

The deal also carries a futuristic angle. Anthropic said it has expressed interest in partnering with SpaceX to develop multiple gigawatts of orbital AI compute capacity. xAI’s announcement frames space-based compute as a possible answer to terrestrial limits around power, land, and cooling.

That idea is still ambitious and technically difficult. Orbital compute is not something the industry can treat as a near-term replacement for Earth-based data centers. But the fact that serious companies are discussing it shows how intense the infrastructure pressure has become. When AI labs begin talking about compute in orbit, it means the ground is already getting crowded.

What This Means for OpenAI, Google, and the Rest of the Market

Anthropic’s move should not be read as a simple victory lap against OpenAI or Google. That would be too shallow. The bigger point is that all frontier AI labs are now being forced to think like infrastructure companies.

OpenAI has deep ties with Microsoft Azure. Google has its own TPU ecosystem and cloud infrastructure. Meta has invested heavily in its own AI infrastructure. Amazon is building around Trainium and cloud capacity. NVIDIA remains the most important hardware supplier in the AI boom. Anthropic’s SpaceX deal fits into this wider pattern.

The competitive question is changing.

It is no longer only: whose model performs better on benchmarks?

It is also: whose system can serve users reliably at scale?

That question matters because real-world adoption is not determined by benchmarks alone. A slightly better model with tight limits can lose developer mindshare to a slightly weaker model that is always available, fast, and deeply integrated into workflows. In enterprise environments, reliability often beats novelty. This has always been true in technology. Mainframes, databases, ERP systems, and cloud platforms all followed the same rule. Businesses eventually choose tools that can be trusted in production.

For AI coding tools, this is even more important. Developers may tolerate occasional limits while experimenting. They will not tolerate them when deadlines, production bugs, and enterprise deployments are involved. A coding assistant that cannot stay available during serious work becomes a toy. A coding agent that can operate reliably becomes infrastructure.

The Agentic AI Angle

The SpaceX deal also connects to a broader shift toward agentic AI.

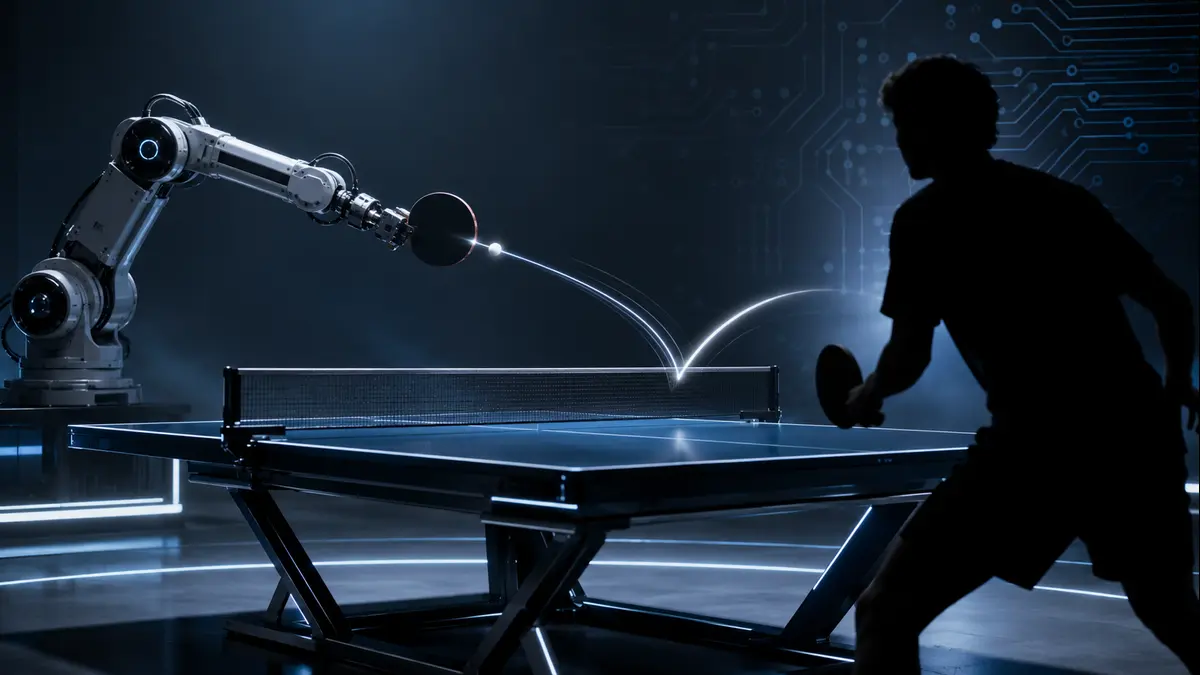

Basic AI assistants answer questions. Agentic systems perform tasks. They need memory, planning, tool use, workflow continuity, environment access, and repeated reasoning. This makes them far more compute-hungry than traditional chat interactions.

Claude Code is one example of this direction. But the same pattern is emerging across finance agents, research agents, legal agents, customer support agents, and internal enterprise automation systems. Once AI moves from “generate an answer” to “complete a task,” usage intensity rises sharply.

This is where compute limits become product limits.

An agent that can only run for a short session cannot handle complex workflows. An agent that constantly stops because of capacity pressure cannot become a reliable co-worker. The infrastructure layer decides how much autonomy the product can actually support.

That is why this announcement deserves more attention than a normal partnership story. It is not simply about Claude Code becoming more generous for developers. It is about the physical foundations required for AI agents to become mainstream.

The Risks Behind the Infrastructure Boom

There is also a serious caution here.

Large AI data centers require enormous power, capital, land, chips, water, cooling, and grid coordination. The infrastructure race may improve AI products, but it also raises questions about energy use, local community impact, hardware supply chains, and market concentration.

Anthropic’s own announcement acknowledges that enterprise customers increasingly need in-region infrastructure for compliance and data residency. The company also says it is being intentional about where it adds capacity, especially in jurisdictions with legal and regulatory frameworks that support large-scale investment.

That point deserves emphasis. AI infrastructure is not just a technical matter. It is becoming geopolitical, regulatory, and environmental. The companies that win the AI race will not only be those with better researchers. They will also be those that can navigate power markets, government relationships, local regulations, chip availability, and public trust.

In that sense, the AI industry is returning to an older business truth: technology may look weightless on the screen, but empires are built on supply chains.

Conclusion: The Future of AI Will Be Built in Data Centers

Anthropic’s SpaceX compute deal is important because it makes the invisible layer visible.

Users will notice higher Claude Code limits. Developers will appreciate fewer interruptions. Enterprises will see stronger capacity signals. But behind those improvements is a larger structural shift: frontier AI is becoming an infrastructure business.

The winners of this next phase will not be decided only by who writes the best research paper or releases the most impressive demo. They will be decided by who can combine model quality with compute access, energy strategy, hardware availability, deployment reliability, and product discipline.

Anthropic has made a clear move in that direction. By securing access to Colossus 1 capacity, expanding Claude Code limits, and diversifying its compute partnerships, the company is positioning itself for a market where demand for AI agents may grow faster than traditional cloud infrastructure can comfortably support.

The AI race is still about intelligence. But increasingly, intelligence needs a factory.

And in 2026, that factory is measured in GPUs, megawatts, data centers, and the ability to keep agents running when users actually need them.