xAI’s Grok 4.3 is more than a model upgrade. It highlights a larger shift in AI: cheaper reasoning, longer context windows, and the rise of agentic workflows built around cost-aware architecture.

The artificial intelligence industry has spent the last few years chasing a familiar prize: bigger models, higher benchmark scores, longer context windows, and more dramatic product demonstrations.

That race is not over. But it is changing.

With Grok 4.3, xAI is not simply introducing another large language model into an already crowded market. It is pushing a different question into the center of the AI conversation: what happens when advanced reasoning becomes cheaper, faster, and easier to route into real workflows?

That question matters more than it appears. In production AI systems, the most important model is not always the one with the highest headline score. It is the one that delivers the right balance of intelligence, latency, cost, tool use, reliability, and integration flexibility. A legal research workflow, a coding assistant, a sales agent, a document parser, and a creative media tool do not need the same intelligence layer.

Grok 4.3 arrives at a time when AI companies are shifting from model spectacle to model economics.

The early era was about proving that generative AI could work. The next era is about making it commercially usable at scale.

What xAI Has Launched With Grok 4.3

xAI’s own developer documentation now positions Grok 4.3 as the recommended model for most text-based use cases, including chat and coding. The company separates this from its dedicated image, video, and voice models, suggesting a broader platform strategy rather than a single-model product story. In simple terms, Grok 4.3 appears to be xAI’s main general-purpose intelligence layer, while Grok Imagine and Grok Voice serve specialized media workflows.

The model is also listed through OpenRouter with a one-million-token context window, support for text and image inputs, and pricing of $1.25 per million input tokens and $2.50 per million output tokens. OpenRouter lists Grok 4.3 as released on April 30, 2026, and describes it as suited for agentic workflows, instruction-following tasks, and applications requiring high factual accuracy.

This is important because a one-million-token context window changes the practical scope of what developers can attempt. It allows longer documents, larger codebases, deeper research sessions, and multi-step agent workflows to sit inside a single model interaction more comfortably than traditional short-context systems.
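
A quick way to see what that scope means in practice is a rough pre-flight check on whether a set of documents fits in the window. The sketch below is illustrative only: the four-characters-per-token ratio is a common rule of thumb rather than xAI's tokenizer, and the function names and the output reserve are assumptions made for this example.

```python
# Rough context-budget check. The characters-per-token ratio is a
# heuristic assumption, not the model's real tokenizer.
CONTEXT_WINDOW_TOKENS = 1_000_000  # context window listed on OpenRouter
CHARS_PER_TOKEN = 4                # assumed average; varies by language and content


def estimate_tokens(text: str) -> int:
    """Approximate the token count of a string using ceiling division."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_in_context(documents: list[str], reserved_for_output: int = 50_000) -> bool:
    """Return True if all documents plus an output reserve fit in the window."""
    total = sum(estimate_tokens(doc) for doc in documents)
    return total + reserved_for_output <= CONTEXT_WINDOW_TOKENS
```

A real pipeline would replace the heuristic with the provider's own token counts, since code, tables, and non-English text can diverge sharply from the average.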

But context alone does not win the market. Cost does.

The Price Cut Is the Real Strategic Signal

The headline around Grok 4.3 is not only its capability. It is that xAI is making higher-end model usage cheaper.

Artificial Analysis reports that Grok 4.3 scored 53 on its Intelligence Index while offering roughly 40% lower input pricing and 60% lower output pricing than Grok 4.20. The analysis places Grok 4.3 on the intelligence-versus-cost Pareto frontier, meaning no other model in the comparison is both more capable and cheaper.

That matters deeply for developers and AI startups.

A model that performs well but is too expensive becomes a demo tool. A model that performs well and can be called repeatedly becomes infrastructure. The difference is not philosophical. It appears directly in monthly cloud bills, API invoices, customer margins, and product pricing.

For AI agents, this becomes even more serious. Agents do not usually call a model once. They break tasks into steps. They search, retrieve, summarize, reason, validate, and sometimes retry. A single user request can create a chain of model calls. When the model is expensive, agentic workflows become difficult to monetize. When the model cost falls, the business model becomes more practical.
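
The arithmetic behind that chain is easy to sketch. The example below uses the per-token prices from the OpenRouter listing; the step names, token counts, and retry rate are hypothetical values chosen for illustration, not measurements of any real agent.

```python
# Per-request cost of a multi-step agent workflow.
INPUT_PRICE_PER_M = 1.25   # USD per million input tokens (OpenRouter listing)
OUTPUT_PRICE_PER_M = 2.50  # USD per million output tokens (OpenRouter listing)

# Hypothetical steps: (name, input tokens, output tokens).
AGENT_STEPS = [
    ("plan", 2_000, 500),
    ("search", 8_000, 1_000),
    ("summarize", 20_000, 2_000),
    ("validate", 6_000, 500),
]


def call_cost(input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of a single model call."""
    total = input_tokens * INPUT_PRICE_PER_M + output_tokens * OUTPUT_PRICE_PER_M
    return total / 1_000_000


def workflow_cost(steps, retry_rate: float = 0.0) -> float:
    """Cost in USD of one user request, inflated by an average retry rate."""
    base = sum(call_cost(inp, out) for _, inp, out in steps)
    return base * (1 + retry_rate)
```

With these made-up numbers a single request costs about $0.055, or about $0.066 at a 20% retry rate. Multiplied across thousands of daily requests, small changes in per-token price move the margin noticeably.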

This is why Grok 4.3 should be read as a pricing event as much as a model event.

Always-On Reasoning Changes the Product Layer

Another notable shift is that Grok 4.3 is being described as a reasoning model where reasoning is always active. OpenRouter states that reasoning cannot be disabled or configured by effort level. xAI’s documentation also notes that reasoning tokens are billed like standard token types at the model’s rate.

This is a subtle but important design choice.

Some AI systems expose reasoning effort as a setting. Developers can choose lighter or deeper reasoning depending on the task. Grok 4.3 appears to make reasoning a default part of the model behavior. That can simplify development because the model is always expected to think through complex requests. But it also means developers must understand token usage carefully, especially when reasoning-heavy tasks are chained inside agents.
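
One way to keep chained reasoning calls in check is a per-request token budget. The sketch below is a minimal illustration: the `TokenBudget` class and its field names are hypothetical, and it assumes the API response reports input, output, and reasoning token counts separately, which should be verified against the provider's actual usage fields.

```python
from dataclasses import dataclass


@dataclass
class TokenBudget:
    """Tracks cumulative token usage across the calls of one agent run."""

    limit: int
    used: int = 0

    def record(self, input_tokens: int, output_tokens: int,
               reasoning_tokens: int = 0) -> bool:
        """Add one call's usage; return False once the budget is exceeded."""
        self.used += input_tokens + output_tokens + reasoning_tokens
        return self.used <= self.limit

    @property
    def remaining(self) -> int:
        """Tokens left before the limit, never negative."""
        return max(self.limit - self.used, 0)
```

An agent loop could call `record` after every step and stop, summarize, or escalate to a human as soon as it returns `False`.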

For businesses, this creates a new kind of tradeoff. Default reasoning may improve answer quality, especially for logic, coding, research, and multi-step tasks. But it also requires cost monitoring. A good product architecture cannot simply throw every request into the strongest reasoning path. It must decide when reasoning is necessary and when a simpler model, retrieval lookup, database query, or deterministic function is enough.

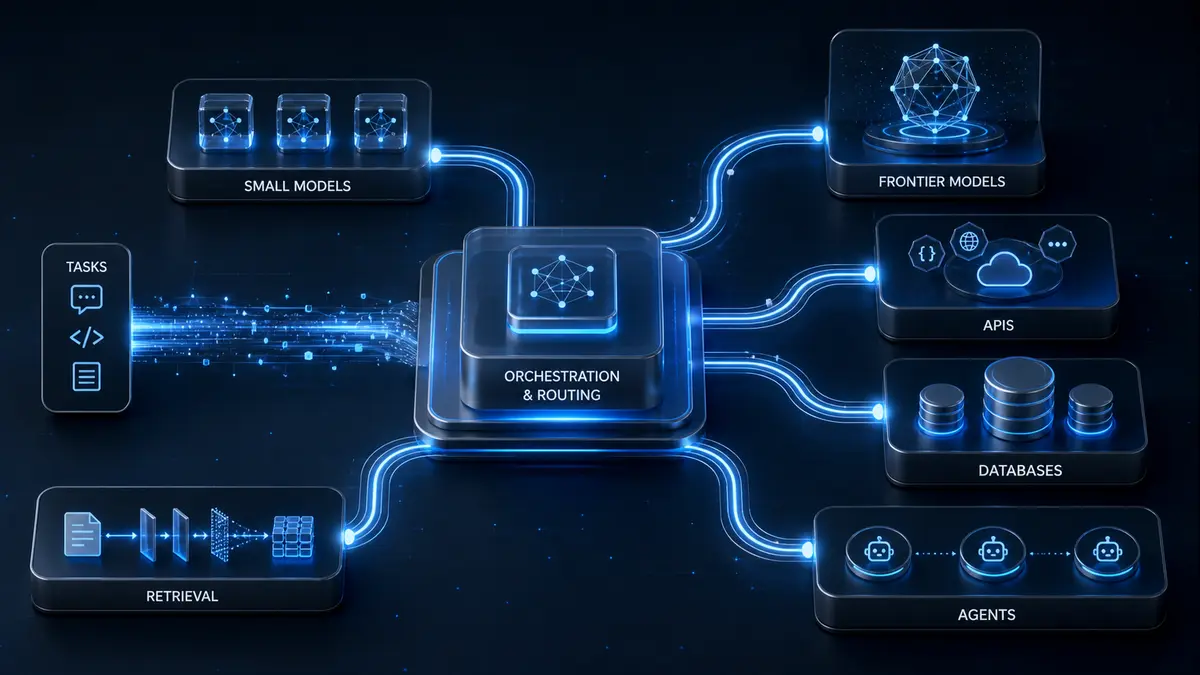

That is where modern AI architecture is heading: not one model for everything, but intelligent routing across models, tools, and memory systems.
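
A toy version of such a router might look like the following. Everything here is an assumption made for illustration: the route names, the keyword lists, and the word-count threshold are placeholders, and a production system would more likely use a trained classifier or explicit request metadata than keyword matching.

```python
import re
from enum import Enum


class Route(Enum):
    DETERMINISTIC = "deterministic"      # database query or plain function
    LIGHT_MODEL = "light_model"          # cheaper, faster model
    REASONING_MODEL = "reasoning_model"  # always-on reasoning model


# Hypothetical routing rules for the sketch.
LOOKUP_PREFIXES = ("order status", "account balance")
REASONING_HINTS = {"compare", "debug", "prove", "plan", "analyze"}
LONG_REQUEST_WORDS = 200


def choose_route(request: str) -> Route:
    """Pick the cheapest path that is likely to handle the request."""
    text = request.lower().strip()
    if text.startswith(LOOKUP_PREFIXES):
        return Route.DETERMINISTIC
    words = re.findall(r"[a-z]+", text)
    if REASONING_HINTS.intersection(words) or len(words) > LONG_REQUEST_WORDS:
        return Route.REASONING_MODEL
    return Route.LIGHT_MODEL
```

The ordering is the design choice that matters: the cheapest deterministic path is checked first, and the reasoning model is reached only when a rule explicitly asks for it.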

Grok 4.3 Fits the Agentic AI Direction

The strongest use case for Grok 4.3 may not be casual chat. It may be agentic execution.

xAI’s broader developer ecosystem includes tools around file handling, collections, search, and code execution. Its documentation shows how developers can attach documents, build collections, and let models search across uploaded files. This places Grok 4.3 closer to the workflow layer: reading, reasoning, retrieving, and acting across structured and unstructured information.

That is where the AI market is moving.

A chatbot answers a question. An agent works through a task.

The difference is enormous. A chatbot can summarize a document. An agent can read ten documents, compare them, extract contradictions, prepare an output file, and call another tool. A chatbot can answer a coding question. An agent can inspect a repository, diagnose an issue, propose a patch, and produce a changelog.

This is why cost and context length are becoming strategic features. They are not just technical specifications. They decide how ambitious an AI workflow can become before the unit economics break.

Why This Matters for AI Startups

For AI startups, Grok 4.3 represents a wider industry lesson: the model layer is becoming more competitive, but the product layer is becoming more important.

When frontier models become cheaper, more companies can access advanced intelligence. That reduces the advantage of simply having access to a powerful model. The advantage shifts toward architecture.

The winners will be teams that know how to combine models with retrieval, routing, memory, user interfaces, workflow automation, payments, monitoring, and domain-specific datasets.

This is especially true for AI marketplaces, enterprise agents, research systems, and vertical AI products. A customer does not pay for a model name. A customer pays for a finished outcome: a report, an analysis, a decision, a dashboard, a workflow, a generated asset, or a solved operational problem.

Grok 4.3’s pricing could make it easier for developers to build these products. But it does not remove the need for careful engineering.

In fact, it makes architecture more important because cheap model access can encourage wasteful design. The best systems will still use the right tool for the right job.

A database lookup should not become an LLM call. A basic classification task should not always require deep reasoning. A high-risk financial, legal, or medical answer should not rely only on raw generation. Retrieval, validation, citations, and human review remain essential.
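
The last point can be expressed as a simple release gate. This is a sketch under stated assumptions: the `DraftAnswer` type, the domain labels, and the three outcomes are hypothetical names, and real systems would also check that each citation actually supports the claim it is attached to.

```python
from dataclasses import dataclass, field

HIGH_RISK_DOMAINS = {"financial", "legal", "medical"}


@dataclass
class DraftAnswer:
    text: str
    domain: str
    citations: list[str] = field(default_factory=list)


def release_decision(answer: DraftAnswer) -> str:
    """Return 'release', 'human_review', or 'reject' for a generated answer."""
    if answer.domain in HIGH_RISK_DOMAINS:
        if not answer.citations:
            return "reject"        # raw generation with no grounding
        return "human_review"      # grounded, but still needs sign-off
    return "release"
```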

Old engineering wisdom still holds: use the simplest reliable system that solves the problem. More powerful models make this rule more valuable.

The Benchmark Race Is Becoming a Business Model Race

Benchmarks remain useful. They help developers compare models across reasoning, coding, language, and tool-use tasks. But benchmarks do not tell the full story.

A model can perform well in a lab and still be hard to build with. It can be intelligent but slow. It can be cheap but inconsistent. It can support long context but struggle with grounded retrieval. It can generate impressive demos but fail under enterprise constraints.

That is why the Grok 4.3 launch should be interpreted through a practical lens. The strongest signal is not a single score. It is the combination of improved benchmark performance, lower pricing, long context, default reasoning, and agentic workflow positioning.

This combination pushes the market toward a more mature phase. AI companies are no longer only competing on intelligence. They are competing on deployability.

Can developers afford to use the model?

Can the model support long workflows?

Can it connect with files, tools, and APIs?

Can it produce reliable outputs at scale?

Can a company build a sustainable product margin on top of it?

These questions will define the next generation of AI products more than theatrical launch videos.

A More Competitive AI Market Is Good for Builders

Grok 4.3 also increases pressure on the broader AI market. OpenAI, Anthropic, Google, Meta, Mistral, DeepSeek, and other model providers are already competing across capability, price, latency, and ecosystem depth. xAI’s move adds more pressure to reduce costs while improving performance.

For builders, this is good news.

Lower model prices make experimentation cheaper. Longer context windows make more ambitious workflows possible. More competition reduces dependency on a single provider. Better routing options allow products to choose different models for different tasks.

But it also means AI startups must be careful with positioning. “We use a powerful model” is not a defensible business. Everyone can use a powerful model. The stronger claim is: “We built a system that turns models into reliable outcomes for a specific market.”

That is where value will be created.

Grok 4.3 Is a Sign of AI’s Infrastructure Phase

Grok 4.3 is not just another model update. It is a sign that the AI industry is entering a more infrastructure-driven phase.

The important story is not only that xAI has improved Grok’s performance. The important story is that advanced reasoning is becoming cheaper, larger-context workflows are becoming more accessible, and agentic systems are becoming central to product design.

This does not mean every AI product should immediately switch to Grok 4.3. Model choice should always depend on use case, reliability, latency, data policy, output quality, and cost. But Grok 4.3 clearly strengthens the argument that the future of AI products will be built around orchestration, not blind model selection.

The next great AI companies may not be the ones that call the biggest model every time. They may be the ones that know when not to.

And that is the real lesson from Grok 4.3: intelligence is becoming abundant, but good architecture is still rare.