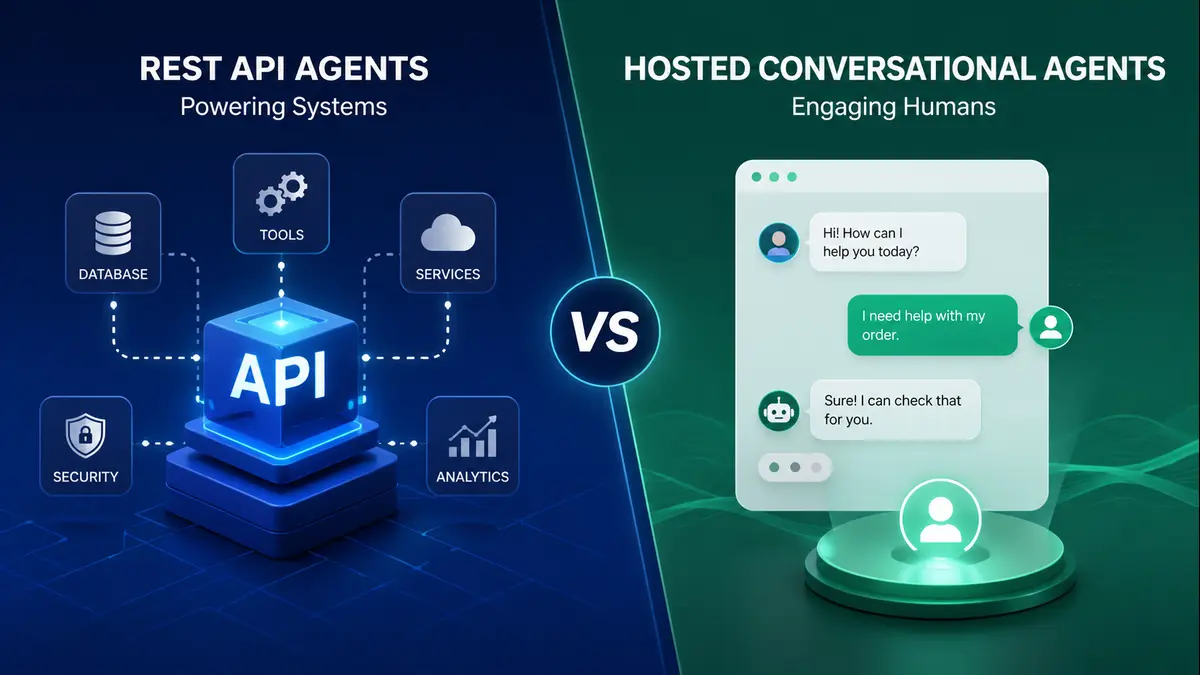

REST API Agents and Hosted Conversational Agents may use the same intelligence, but they monetize through entirely different business mechanics. Here is where the deeper long-term AI revenue is likely to emerge.

Artificial intelligence is no longer a novelty feature layered on top of software. It is rapidly becoming an operational layer of software itself. Across startups, enterprises, and consumer platforms, businesses are now confronting a far more practical question than whether AI works.

The real question is simple: what form of AI actually monetizes best?

Two dominant models are emerging in this new agent economy. On one side are REST API Agents: programmable autonomous systems exposed through structured endpoints, designed to perform tasks, trigger workflows, and integrate directly into backend applications. On the other are Hosted Conversational Agents: polished chat-first products that users interact with naturally through dialogue, memory, and iterative exchanges. Both are intelligent. Both can execute tasks. Both can be sold. But they do not make money in the same way, and that difference may define which AI businesses become infrastructure giants and which become temporary interface trends.

Two Very Different Paths to the Same Intelligence

REST API Agents treat artificial intelligence as a machine-consumable service.

A developer sends a structured request: a task, some context, a few tool permissions, perhaps a callback URL. The agent processes the instruction, invokes the required tools, validates the outputs, and returns a clean machine-readable result. In more advanced systems, asynchronous webhooks, semantic caching layers, role-based access controls, and observability logs are built around this interaction to make the agent production-safe.
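To make the shape of that exchange concrete, here is a minimal sketch in Python. It is not any vendor's API: the `AgentRequest` and `AgentResult` types, the `check_invoice_total` tool, and the `handle_request` function are all hypothetical names chosen for illustration, and the "agent" is a plain function standing in for a real LLM-backed service.

```python
from dataclasses import dataclass, field, asdict
from typing import List, Optional
import json


@dataclass
class AgentRequest:
    """Hypothetical request body: task, context, tool permissions, callback."""
    task: str
    context: dict
    allowed_tools: List[str]
    callback_url: Optional[str] = None  # only needed for asynchronous delivery


@dataclass
class AgentResult:
    """Hypothetical machine-readable response."""
    status: str
    output: dict
    tools_used: List[str] = field(default_factory=list)


def check_invoice_total(context: dict) -> dict:
    # Illustrative tool: compare the stated total with the sum of line items.
    # Amounts are integers (cents) to avoid floating-point rounding.
    expected = sum(item["amount"] for item in context["line_items"])
    return {"expected_total": expected,
            "matches": expected == context["invoice_total"]}


# Hypothetical tool registry; a real system would load this from configuration.
TOOLS = {"check_invoice_total": check_invoice_total}


def handle_request(req: AgentRequest) -> AgentResult:
    """Run only the tools the caller permitted and return a structured result."""
    output, used = {}, []
    for name in req.allowed_tools:
        tool = TOOLS.get(name)
        if tool is None:
            return AgentResult("error", {"reason": f"unknown tool: {name}"})
        output[name] = tool(req.context)
        used.append(name)
    return AgentResult("ok", output, used)


request = AgentRequest(
    task="validate_invoice",
    context={"line_items": [{"amount": 1200}, {"amount": 800}],
             "invoice_total": 2000},
    allowed_tools=["check_invoice_total"],
)
# The caller receives JSON it can parse, never free-form prose.
print(json.dumps(asdict(handle_request(request))))
```

The point of the sketch is the contract, not the logic: the caller declares which tools may run, and the response is data that another program can act on without a human reading it.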

This is intelligence designed to disappear quietly inside software. A finance company can deploy such an agent to validate invoices, reconcile payment mismatches, check policy compliance, and trigger downstream approvals without a human ever opening a chat window. The user may not even know an LLM was involved.

Hosted Conversational Agents operate in the opposite direction.

They sit in front of the user, not behind the backend. Here, the interaction begins with natural language. A customer types a problem, asks a question, expresses confusion, changes the requirement midway, and expects the system to understand. The hosted platform manages memory, clarification loops, safety controls, retrieval, and tool use beneath a conversational shell that feels smooth and human.

No APIs are visible. No payloads are exposed. No structured requests are required.

One model (REST API Agents) feels like programmable infrastructure. The other (Hosted Conversational Agents) feels like an intelligent product. That distinction sounds cosmetic at first. It is not. It fundamentally changes how revenue is generated.

Where REST API Agents Win on Technical Design

REST API Agents are built for environments where precision matters more than personality.

Their greatest strength is composability. Because they expose intelligence through standard HTTP patterns, they can slot directly into microservice architectures, workflow engines, ERP systems, internal dashboards, and third-party applications. Logging is cleaner. Rate limiting is easier. Gateway-level security remains familiar. Teams can audit every invocation, monitor failure states, and build deterministic validation layers around probabilistic LLM behavior.
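A deterministic validation layer can be as simple as refusing to pass model output downstream unless it parses and matches an expected shape. The sketch below assumes the agent was asked to return JSON with three fields; the field names and the `validate_agent_output` function are hypothetical and only illustrate the pattern.

```python
import json
from typing import List, Tuple

# Hypothetical schema: the fields a downstream approval step would require.
REQUIRED_FIELDS = {"invoice_id": str, "amount_cents": int, "approved": bool}


def validate_agent_output(raw: str) -> Tuple[bool, List[str]]:
    """Return (is_valid, errors) for a raw model response."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return False, ["output is not valid JSON"]
    if not isinstance(data, dict):
        return False, ["output is not a JSON object"]

    errors = []
    for name, expected_type in REQUIRED_FIELDS.items():
        if name not in data:
            errors.append(f"missing field: {name}")
            continue
        value = data[name]
        # bool is a subclass of int in Python, so reject it for int fields.
        wrong_bool = expected_type is int and isinstance(value, bool)
        if not isinstance(value, expected_type) or wrong_bool:
            errors.append(f"wrong type for {name}")

    # Business rule applied only once the structure is known to be sound.
    if not errors and data["amount_cents"] < 0:
        errors.append("amount_cents must be non-negative")
    return (not errors), errors


ok, problems = validate_agent_output(
    '{"invoice_id": "INV-1", "amount_cents": 1500, "approved": true}'
)
print(ok, problems)  # True []
```

Because the check is ordinary code, every rejected response can be logged, retried, or routed to a human, which is what makes the probabilistic step auditable.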

This matters enormously in enterprise deployments. A backend automation workflow does not care whether the agent sounds empathetic. It cares whether the output was correct, whether the anomaly was caught, and whether the action was logged.

That is why REST-based agent systems are becoming attractive for:

- financial operations,

- supply chain reconciliation,

- DevOps monitoring,

- autonomous document processing,

- compliance checking,

- internal enterprise orchestration.

They also align neatly with emerging machine-to-machine standards. Protocols such as MCP (Model Context Protocol) and the early evolution of A2A (agent-to-agent) communication are making agent discovery, tool registration, and chained execution far more standardized than the ad hoc prompt wiring seen in first-generation AI products.

In short, REST agents are increasingly behaving less like chatbots and more like intelligent middleware. And middleware, historically, makes very serious money when embedded deeply enough.

Why Hosted Conversational Agents Continue to Dominate User Adoption

Hosted Conversational Agents win in an entirely different arena: accessibility. Human beings are messy communicators. They do not always provide structured requirements. They ramble, omit context, ask follow-up questions, contradict themselves, and often decide what they want only after seeing an initial answer. Conversational systems handle that ambiguity better than rigid API calls ever can. A customer support user does not say: “POST /resolve-ticket with structured issue taxonomy.” He says: “My order is delayed, the refund has not come, and honestly I’m tired of explaining this.”

A hosted conversational agent can absorb emotion, ask clarifying questions, retrieve order data, trigger backend actions, and continue the interaction while maintaining continuity. This lowers friction dramatically for non-technical users and creates the illusion of a responsive assistant rather than a software process.

That illusion is commercially powerful, because users build habits around interfaces that feel easy. This is precisely why hosted systems are seeing rapid adoption in:

- customer support,

- internal knowledge assistants,

- onboarding guides,

- AI copilots,

- sales qualification flows,

- consumer productivity subscriptions.

They reduce the training burden. They reduce the integration burden. Most importantly, they reduce the thinking burden for the user. And humans will always pay a premium for products that ask them to think less.

The Monetization Difference Is Much Larger Than It Appears

This is where the market begins to separate sharply. REST API Agents monetize through units of work.

A provider can charge:

- per API call,

- per successful tool execution,

- per processed document,

- per thousand autonomous operations,

- or through enterprise automation tiers tied to throughput and concurrency.

This model is measurable. Every invocation can be priced. Every SLA can be attached to a technical metric. Every increase in automation directly increases usage revenue. It resembles payment infrastructure economics. No one gets emotionally attached to a payment gateway either, yet companies like Stripe became massive because every transaction quietly produced monetizable backend value. REST API Agents operate with the same silent compounding effect. The more deeply they sit inside a business workflow, the harder they become to replace and the more predictably they bill.
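The arithmetic behind unit-of-work pricing is simple enough to sketch. The unit prices below are invented purely for illustration and do not reflect any real provider's rates; `PRICES` and `usage_invoice` are hypothetical names.

```python
from decimal import Decimal
from typing import Dict

# Hypothetical price list in dollars per unit of work (illustrative only).
PRICES = {
    "api_call": Decimal("0.002"),
    "tool_execution": Decimal("0.01"),
    "document": Decimal("0.05"),
}


def usage_invoice(usage: Dict[str, int]) -> Decimal:
    """Sum metered usage into a monthly charge.

    Decimal is used instead of float so currency amounts stay exact.
    """
    total = Decimal("0")
    for unit, count in usage.items():
        if unit not in PRICES:
            raise KeyError(f"no price defined for unit: {unit}")
        total += PRICES[unit] * count
    return total


month = {"api_call": 10_000, "tool_execution": 2_500, "document": 400}
# 10,000 * 0.002 + 2,500 * 0.01 + 400 * 0.05 = 20 + 25 + 20 = 65
print(usage_invoice(month))
```

Under this kind of model, revenue tracks the customer's automation volume directly: if the workflow doubles its throughput, the invoice doubles with it.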

Hosted Conversational Agents monetize through retention and pricing power.

Users typically pay through:

- monthly subscriptions,

- seat-based enterprise licenses,

- conversation credits,

- premium memory features,

- voice add-ons,

- or usage-based tiers layered on top of subscriptions.

The revenue per user can be substantial because hosted systems create recurring habit loops. People return daily, sometimes hourly, because the interface itself becomes part of their work rhythm. This gives hosted platforms one enormous advantage: direct customer intimacy. They are not just selling execution. They are selling experience.

However, hosted conversational systems also carry a dangerous cost variable. Long context windows, repeated clarification loops, unnecessary rambling, and user unpredictability can burn token costs rapidly. The prettier the conversation, the uglier the backend invoice can become if optimization is weak. So while hosted agents often scale faster in visible adoption, their margins can become more fragile than founders initially expect.
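A rough model shows why long conversations get expensive. The sketch below assumes the simplest possible setup: the full history is resent to the model on every turn, with no truncation, summarization, or prompt caching. Real platforms often apply such optimizations, so treat the numbers as an upper-bound illustration; the function name and token counts are hypothetical.

```python
from typing import Tuple


def conversation_tokens(turns: int, system_tokens: int,
                        user_tokens: int, reply_tokens: int) -> Tuple[int, int]:
    """Return (total_input_tokens, total_output_tokens) for a conversation.

    Assumes every request carries the system prompt plus all earlier
    messages, so the input size grows with each turn.
    """
    history = system_tokens
    total_in = 0
    total_out = 0
    for _ in range(turns):
        history += user_tokens   # the new user message joins the history
        total_in += history      # the whole history is sent as input
        total_out += reply_tokens
        history += reply_tokens  # the reply is carried into later turns
    return total_in, total_out


print(conversation_tokens(10, 200, 50, 150))  # (11500, 1500)
print(conversation_tokens(20, 200, 50, 150))  # (43000, 3000)
```

Doubling the conversation from 10 to 20 turns doubles the output tokens but raises input tokens from 11,500 to 43,000, roughly 3.7 times as many, because cumulative input grows quadratically with turn count under this assumption.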

Real-World Revenue Reveals the Difference

Imagine two companies. The first sells a hosted AI support assistant to mid-sized ecommerce brands. Brands pay a monthly subscription because the assistant handles thousands of customer chats, reduces support headcount, and improves response time. This is a valuable business.

Now imagine a second company selling REST API-based autonomous finance operations to enterprise clients. Its agents reconcile invoices, detect mismatches across procurement systems, validate payouts, and trigger exception routing twenty-four hours a day. This second company may have fewer visible users.

But each deployment sits closer to hard business value. One reduces conversational burden. The other directly reduces financial leakage and labor cost. Enterprise budgets tend to reward the second category with larger contracts, longer lock-in, and deeper integration dependence. That is why the flashiest AI products are not always the ones building the deepest monetization moats. Sometimes the most profitable intelligence is the intelligence nobody sees.

The Smartest Players Are Already Moving Toward Hybrids

The market is not standing still in pure categories. The most sophisticated AI companies are beginning to combine both models: a conversational front-end for discovery, explanation, and human interaction, powered by robust REST-based execution engines underneath. This architecture makes strategic sense. Let the user talk naturally. Let the backend execute ruthlessly. The conversation gathers ambiguity. The API layer handles determinism.

A sales manager may ask a hosted agent to “check which pending invoices are unusually delayed and suggest action.” The front-end manages dialogue. The backend REST agents perform ERP queries, anomaly detection, policy checks, and action recommendations.
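The split between the two layers can be sketched in a few lines. Everything here is a hypothetical stand-in: `parse_intent` uses keyword matching where a real product would use an LLM, the 30-day threshold is an arbitrary assumption, and `find_delayed_invoices` represents the deterministic backend step that would normally sit behind a REST endpoint.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class Invoice:
    invoice_id: str
    due: date
    paid: bool


def find_delayed_invoices(invoices: List[Invoice], today: date,
                          threshold_days: int) -> List[str]:
    """Backend layer: a deterministic, auditable query."""
    return [inv.invoice_id for inv in invoices
            if not inv.paid and (today - inv.due).days > threshold_days]


def parse_intent(utterance: str) -> Optional[dict]:
    """Front-end layer: turn free text into a structured request.

    Keyword matching is a placeholder for the language model.
    """
    text = utterance.lower()
    if "invoice" in text and "delayed" in text:
        return {"action": "find_delayed_invoices", "threshold_days": 30}
    return None


def handle_turn(utterance: str, invoices: List[Invoice], today: date) -> str:
    """Route one user message through both layers and phrase the answer."""
    intent = parse_intent(utterance)
    if intent is None:
        return "Could you tell me more about what you need?"
    days = intent["threshold_days"]
    ids = find_delayed_invoices(invoices, today, days)
    if not ids:
        return f"No pending invoices are more than {days} days overdue."
    return (f"{len(ids)} pending invoice(s) are more than {days} days "
            f"overdue: {', '.join(ids)}")


ledger = [
    Invoice("INV-A", date(2025, 5, 1), paid=False),
    Invoice("INV-B", date(2025, 6, 20), paid=False),
    Invoice("INV-C", date(2025, 1, 1), paid=True),
]
print(handle_turn("Check which pending invoices are unusually delayed",
                  ledger, today=date(2025, 6, 30)))
```

The conversational layer only decides what to ask for; the backend call is the part that can be metered, logged, and billed per execution.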

To the user, it feels like one seamless AI assistant. In reality, it is two monetization engines stacked together. One captures user engagement. The other captures machine-scale operational billing. That combination may become the defining architecture of serious agent businesses over the next two years.

The Bigger Money Will Follow Measurable Automation

By 2027, hosted conversational agents are likely to still dominate consumer subscriptions and light SMB deployments because they are easy to adopt, easy to understand, and easy to build habits around. But the larger enterprise spend is likely to flow elsewhere. It will move toward API-first autonomous systems embedded inside procurement, finance, logistics, customer operations, and industrial workflows where every completed machine action can be tied directly to a balance-sheet outcome.

Businesses admire elegant conversations. They pay premiums for measurable automation. That is the difference. The long-term winners in the AI agent economy will not merely build systems that sound intelligent. They will build systems that convert intelligence into billable units of operational value. Some will sell delightful conversations. The biggest ones will quietly sell invisible compounding infrastructure. And history, more often than not, has shown that infrastructure businesses tend to own the richest layer of every technology cycle.